Difference between revisions of "AWS"

Adelo Vieira (talk | contribs) (→Use case - Fan Out) |

Adelo Vieira (talk | contribs) (→Communicating easily and reliably among components - Amazon Simple Queue Service (SQS)) |


Revision as of 08:35, 27 February 2026

This is a protected page.

- Overview of Amazon Web Services: https://d1.awsstatic.com/whitepapers/aws-overview.pdf

Contents

- 1 AWS Academy

- 2 AWS Training & Certification Portal

- 2.1 AWS Academy Cloud Foundations

- 2.2 AWS Academy Cloud Architecting

- 2.2.1 Module 0 - AWS in Review

- 2.2.2 Module 1 - Welcome to Academy Cloud Architecting

- 2.2.3 Module 2 - Designing Your Environment

- 2.2.4 Module 3 - Designing for High Availability - Part 1

- 2.2.5 Module 4 - Designing for High Availability - Part 2

- 2.2.6 Module 5 - Automating Your Infrastructure

- 2.2.7 Module 6 - Decoupling Your Infrastructure

- 2.2.7.1 Loose Coupling

- 2.2.7.2 Loose coupling Strategies

- 2.2.7.3 Communicating easily and reliably among components - Amazon Simple Queue Service (SQS)

- 2.2.7.3.1 Amazon Simple Queue Service - SQS

- 2.2.7.3.2 Loose coupling with Amazon SQS - An example

- 2.2.7.3.3 Amazon SQS Benefits

- 2.2.7.3.4 Distributed queues properties

- 2.2.7.3.5 Amazon SQS - Visibility timeout

- 2.2.7.3.6 Amazon SQS - Dead letter queue

- 2.2.7.3.7 Sharing a queue

- 2.2.7.3.8 Amazon SQS use cases

- 2.2.7.3.9 Amazon SNS Characteristics

- 2.2.7.3.10 Amazon SNS and SQS comparison

- 2.2.7.3.11 Use case - Fan Out

- 2.2.7.4 Communicating with loose coupling and Amazon DynamoDB

- 2.2.7.5 Amazon API Gateway

- 2.2.7.6 Serverless Architectures

- 2.2.7.7 Decoupling Examples

- 2.2.7.8 Module 6 - Decoupling Your Infrastructure - Assessment

- 2.2.8 Module 7 - Designing Web Scale Media

- 2.2.9 Module 14 - Troubleshooting

- 2.2.10 Module 15 - Design Patterns and Sample Architectures

- 3 Pillars of the AWS Well-Architected Framework

- 3.1 Module 9 - Well-Architected Pillar 1 - Operational Excellence

- 3.2 Module 10 - Well-Architected Pillar 2 - Security

- 3.3 Module 11 - Well-Architected Pillar 3 - Reliability

- 3.4 Module 12 - Well-Architected Pillar 4 - Performance Efficiency

- 3.5 Module 13 - Well-Architected Pillar 5 - Cost Optimization

- 4 Vocareum labs

- 4.1 ACA Module 3 LAB - Making Your Environment Highly Available

- 4.1.1 Task 1 - Inspect Your environment

- 4.1.2 Task 2 - Login to your Amazon EC2 instance

- 4.1.3 Task 3 - Install and Launch Your Web Server PHP Application

- 4.1.4 Task 4 - Create an Amazon Machine Image - AMI

- 4.1.5 Task 5 - Configure a Second Availability Zone

- 4.1.6 Task 6 - Create an Application Load Balancer

- 4.1.7 Task 7 - Create an Auto Scaling Group

- 4.1.8 Task 8 - Test the Application

- 4.1.9 Task 9 - Test High Availability

- 4.2 Lab - Introduction to Amazon CloudFront

- 4.3 Multi-region failover with Amazon Route 53

- 5 Services and Important concepts

AWS Academy

File:AWS_Academy_on_Vocareum_and_VitalSource_Bookshelf.pdf

AWS Academy uses three (3) learning platforms to provide you with access to your learning resources. This guide shows you how to register on each platform and access the learning resources. There are three steps to registering for your class:

- Register in the AWS Training & Certification Portal

- Complete registration in Vocareum

- Complete registration in VitalSource Bookshelf

- AWS Starter Account: Students can open an AWS Starter Account that allows you to use some resources of the AWS platform for free and without the need of entering a credit card.

- AWS Training & Certification Portal

The AWS Training & Certification Portal is our Learning Management System. It hosts videos, eLearning modules, and knowledge assessments:

Resources available on this learning platform:

- Video introductions

- Video console demos

- Narrated lectures

- Knowledge checks

Registering on Your Class:

- This is the general link: https://www.aws.training/SignIn

- We can create an account normally. I don't think it requires any special authorization.

- However, your educator could provide you with a registration link. This is the link provided in the course:

- Vocareum:

General link: https://labs.vocareum.com/home/login.php

Your educator could provide you with a registration link. Link provided for the course: https://labs.vocareum.com/home/login.php?code=&e=You%20are%20not%20logged%20in.%20Please%20login

- A***2

Resources available on this learning platform:

- Lab exercises

- Projects

- Activities

- VitalSource BookShelf:

https://bookshelf.vitalsource.com/

This is the link provided by my educator: http://aws-academy-cctcollegedublin.vstbridge.com

We also create a VitalSource account normally. I don't think it requires any special authorization, although the guide says "Your educator will register you in VitalSource Bookshelf".

Resources available on this learning platform:

- Student materials

- Free accounts:

- AWS Free Tier: https://aws.amazon.com/free

- Opening an AWS account starts the AWS Free Tier for one year. The AWS Free Tier gives free access to a set of resources (not everything and not unlimited) for one year.

- AWS Educate - AWS Starter Account:

- Link to login: https://www.awseducate.com/student/s/awssite

- A***2

- https://www.awseducate.com

- https://www.awseducate.com/registration#APP_TYPE

- In addition to the AWS Free Tier option (which is available to any account), AWS also offers special accounts for students or people in education. This type of account also gives free access to a set of resources (not everything and not unlimited) for the duration of your studies. To open one you have to prove that you are a student. The easiest way is to have a personal email address on the educational institution's domain (adelo@cct.ie, for example). If you don't have such an email address, you can also prove that you are a student in other ways (you need to follow the AWS procedure and you should be granted a Starter Account) by contacting AWS Educate at https://aws.amazon.com/education/awseducate/contact-us/

AWS Training & Certification Portal

There are 2 courses from the AWS Training & Certification Portal that I've been working on:

- AWS Academy Cloud Foundations

- AWS Academy Cloud Architecting

AWS Academy Cloud Foundations

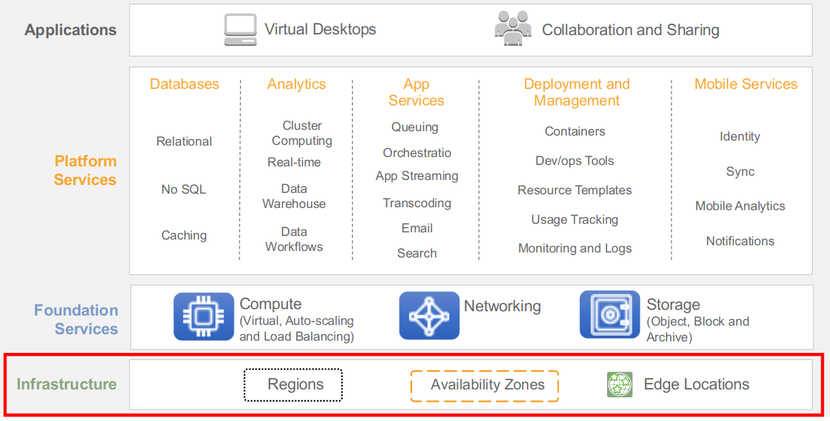

AWS Global Infrastructure

AWS Global Infrastructure can be broken down into three elements:

- Regions

- Availability zones

- Edge Locations

- AWS Regions:

- An AWS Region is a geographical area. Each Region is made up of two or more Availability Zones.

- AWS has 18 Regions worldwide.

- You enable and control data replication across Regions.

- Communication between Regions uses the AWS backbone network infrastructure.

- AWS Availability Zones:

- Each Availability Zone is:

- Made up of one or more data centers.

- Designed for fault isolation.

- Interconnected with other Availability Zones using high-speed private links.

- You choose your availability zones. AWS recommends replicating across Availability Zones for resiliency.

- AWS Edge Locations:

- An Edge Location is where users access AWS services.

- It is a global network of 114 points of presence (103 Edge Locations and 11 regional Edge Caches) in 56 cities across 24 countries.

- Specifically used with Amazon CloudFront, a Global Content Delivery Network (CDN), to deliver content to end users with reduced latency.

- Regional edge caches are used for content with infrequent access.

- AWS Infrastructure Features:

- Elastic and Scalable:

- Elastic infrastructure; dynamic adaptation of capacity.

- Scalable infrastructure; adapts to accommodate growth.

- Fault-tolerant:

- Continues operating properly in the presence of a failure.

- Built-in redundancy of components.

- High availability:

- High level of operational performance

- Minimized downtime

- No human intervention

AWS Core Services

AWS Foundational/Core Services

AWS has a lot of services, but some of them provide the foundation for all solutions; we refer to those as the Core Services.

Compute

https://www.youtube.com/watch?v=kogSnxk-5t0

- Amazon Elastic Compute Cloud (EC2)

- EC2 is essentially a computer in the cloud. EC2 is a Web Service that provides compute capacity in the cloud (Virtual computing environment in the cloud).

- EC2 allows you to create and configure virtual compute capacity.

- Elastic refers to the fact that, if properly configured, you can increase or decrease the number of servers required by an application automatically, according to the current demands on that application.

- Compute refers to the compute, or server resources that are being presented.

- AWS Lambda:

- AWS Lambda is an event-driven, serverless computing platform. It is a computing service that runs code in response to events and automatically manages the computing resources required by that code.

- AWS Lambda lets you run code without provisioning or managing servers. You pay only for the compute time you consume - there is no charge when your code is not running.

- The purpose of Lambda, as compared to AWS EC2, is to simplify building smaller, on-demand applications that are responsive to events and new information. AWS targets starting a Lambda instance within milliseconds of an event. Node.js, Python, Java, Go[2], Ruby[3] and C# (through .NET Core) are all officially supported as of 2018. In late 2018, custom runtime support[4] was added to AWS Lambda giving developers the ability to run a Lambda in the language of their choice.

- Auto Scaling:

- Scales EC2 capacity as needed

- Improves availability

- AWS Elastic Beanstalk:

- AWS Elastic Beanstalk is an easy-to-use service for deploying and scaling web applications and services developed with Java, .NET, PHP, Node.js, Python, Ruby, Go, and Docker on familiar servers such as Apache, Nginx, Passenger, and IIS.

- You can simply upload your code and Elastic Beanstalk automatically handles the deployment, from capacity provisioning, load balancing, auto-scaling to application health monitoring.

Networking

- VPC

- Elastic Load Balancer:

- Elastic Load Balancing automatically distributes incoming application traffic across multiple targets, such as Amazon EC2 instances, containers, IP addresses, and Lambda functions. It can handle the varying load of your application traffic in a single Availability Zone or across multiple Availability Zones.

- Route 53:

Storage

- There are three broad categories of storage:

- Instance storage: Temporary storage that is added to your Amazon EC2 instance

- Amazon Elastic Block Store (Amazon EBS): It is persistent, mountable storage, which can be mounted as a device to an Amazon EC2 instance. Amazon EBS can only be mounted to an Amazon EC2 instance within the same Availability zone.

- Each Amazon EBS volume is automatically replicated within its Availability Zone to protect you from component failure, offering high availability.

- Uses:

- Boot volumes and storage for Amazon EC2 instances

- Data Storage with a file system

- Database hosts

- Enterprise applications.

- Volume types:

- Solid-State Drives (SSD)

- Hard Disk Drives (HDD)

- Amazon Simple Storage Service (Amazon S3): Similar to Amazon EBS. It is persistent storage; however, it can be accessed from anywhere.

- Object level storage

- Amazon S3 can even be configured to support cross-region replication, such that data put into an Amazon S3 bucket in one Region can be automatically replicated to a bucket in another Region.

- Uses:

- Storing application assets

- Static web hosting

- Backup and Disaster Recovery (DR)

- Staging area for big data

- Other storage options:

- Amazon Elastic File System (Amazon EFS)

- Provides simple, scalable, elastic file storage for use with AWS services and on-premises resources. It is easy to use and offers a simple interface that allows you to create and configure file systems quickly and easily.

- Amazon EFS is built to elastically scale on demand without disrupting applications, growing and shrinking automatically as you add and remove files, so your applications have the storage they need, when they need it.

- Amazon Glacier:

- Amazon Glacier is a secure, durable, and extremely low-cost cloud storage service for data archiving and long-term backup.

- Data stored in Amazon Glacier takes several hours to retrieve, which is why it's ideal for archiving.

- Amazon Relational Database Service (RDS)

- Amazon DynamoDB

- Amazon Redshift

- Amazon Aurora

Block vs Object Storage

What if you want to change one character in a 1 GB file?:

- With block storage, you only need to change the block that contains the character.

- With object storage, the entire file must be updated.

This difference has a major impact on the throughput, latency, and cost of your storage solution. Block storage solutions are typically faster and use less bandwidth, but cost more than object-level storage.
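The one-character change above can be put into numbers with a small sketch. The 4 KiB block size and the function names are assumptions made for illustration; real block sizes depend on the volume and file system.

```python
def bytes_rewritten_block(file_size, changed_offsets, block_size=4096):
    """Block storage: only the blocks containing a changed byte are rewritten."""
    if any(offset < 0 or offset >= file_size for offset in changed_offsets):
        raise ValueError("offset outside the file")
    touched_blocks = {offset // block_size for offset in changed_offsets}
    return len(touched_blocks) * block_size


def bytes_rewritten_object(file_size, changed_offsets):
    """Object storage: any change means the whole object is uploaded again."""
    return file_size if changed_offsets else 0


GIB = 1024 ** 3
block_cost = bytes_rewritten_block(GIB, [123_456])    # one block
object_cost = bytes_rewritten_object(GIB, [123_456])  # the full 1 GiB
```

Under these assumptions the block store rewrites a few kilobytes while the object store rewrites the entire file, which is where the bandwidth and latency difference comes from.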

AWS Academy Cloud Architecting

https://www.aws.training/SignIn

https://www.aws.training/Details/Curriculum?transcriptid=1zeJo6HNnUC1res7bz-WRA2&id=37928#modules

Here, in the AWS Training & Certification Portal, the modules are presented in a video format, which I find poorly suited to reading and even less suited to searching for information, because you have to step through each of the videos that make up the module, and you cannot download a PDF with all the content. However, each module presented in the AWS Training & Certification Portal is also available in another format in VitalSource BookShelf. That format is much better than the video format of AWS Academy Cloud Architecting. The VitalSource BookShelf format can be downloaded as a PDF, but the download is an image with no search options (so it is not useful at all). This is the «VitalSource BookShelf» link provided by my educator: http://aws-academy-cctcollegedublin.vstbridge.com

Module 0 - AWS in Review

- VitalSource link: https://aws-academy-cctcollegedublin.vstbridge.com/#/reader/200-ACACAD-11-EN-M0SG?context_token=b32272e0-5a1f-0138-3176-6eaf1e728f01

Module 1 - Welcome to Academy Cloud Architecting

Module 2 - Designing Your Environment

Slides 4-18, 30-75, 94-96

- VitalSource link: https://aws-academy-cctcollegedublin.vstbridge.com/#/reader/200-ACACAD-11-EN-M2SG?context_token=728fb310-5a1f-0138-5c79-62a35fcfb0e5

Module 2 - Designing Your Environment - Assessment

Module 3 - Designing for High Availability - Part 1

Slides 4-50, 56-69

- VitalSource link: https://aws-academy-cctcollegedublin.vstbridge.com/#/reader/200-ACACAD-11-EN-M3SG?context_token=49938f30-5a1e-0138-466b-46285ee08913

High availability is about ensuring that your application's downtime is minimized as much as possible.

Availability refers to the amount of time your system is in a functioning condition. In general terms, your availability is referred to as 100% minus your system's downtime.
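The "100% minus downtime" definition can be turned into a quick calculation. This is a minimal sketch; the function names and the choice of a 365-day year as the default period are assumptions.

```python
HOURS_PER_YEAR = 365 * 24  # 8760, assuming a non-leap year


def availability_percent(downtime_hours, period_hours=HOURS_PER_YEAR):
    """Availability = 100% minus the share of the period spent down."""
    return 100.0 * (1.0 - downtime_hours / period_hours)


def allowed_downtime_hours(availability, period_hours=HOURS_PER_YEAR):
    """Inverse: how many hours of downtime a given availability target permits."""
    return period_hours * (1.0 - availability / 100.0)


one_percent_down = availability_percent(87.6)     # 87.6 h is 1% of a year
three_nines_budget = allowed_downtime_hours(99.9)  # hours per year at 99.9%
```

For example, a 99.9% target leaves roughly 8.76 hours of downtime per year under these assumptions.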

Module 3 - Designing for High Availability - Part 1 - Assessment

Module 4 - Designing for High Availability - Part 2

Slides 4-45, 49-68

- VitalSource link: https://aws-academy-cctcollegedublin.vstbridge.com/#/reader/200-ACACAD-11-EN-M4SG?context_token=df6c04a0-5a1e-0138-3176-6eaf1e728f01

Module 4 - Designing for High Availability - Part 2 Assessment

Module 5 - Automating Your Infrastructure

Slides: 4-21

- VitalSource link: https://aws-academy-cctcollegedublin.vstbridge.com/#/reader/200-ACACAD-11-EN-M5SG?context_token=ef667a70-5a1e-0138-d9f2-7210a2c4c62a

Infrastructure as code

Infrastructure as code is the process of applying techniques, practices, and tools from software development to create reusable, maintainable, extensible, and testable infrastructure.

With AWS CloudFormation, you can treat infrastructure as code. AWS CloudFormation allows you to create templates that can be used to launch, configure, and connect AWS resources with JavaScript Object Notation (JSON) or YAML-formatted templates.

An AWS CloudFormation template allows you to:

- Treat it as code, and manage it by using your choice of version control, such as Git or Subversion.

- Define an entire application stack (all the resources required for your application) in a JSON template file.

- Define runtime parameters for a template, such as Amazon Elastic Compute Cloud—or Amazon EC2—Instance Size, Amazon EC2 Key Pair, etc.

You can now create YAML-formatted templates to describe your AWS resources and properties in AWS CloudFormation. You can use either YAML-formatted templates or JSON-formatted templates to model and describe the resources and properties in your AWS infrastructure. Both YAML-formatted and JSON-formatted AWS CloudFormation templates have the same structure, and they both support all the same features. You can now also create cross stack references that let you share outputs from one stack with another stack. This feature lets you share things like AWS Identity and Access Management—or IAM—roles, virtual private cloud—or VPC—information, and security groups. Previously, you needed to use AWS CloudFormation custom resources to accomplish these tasks. Now, you can export values from one stack and import them to another stack by using the new ImportValue intrinsic function.

Cross-stack references are useful for customers who separate their AWS infrastructure into logical components that are grouped by stack (such as a network stack, an application stack, etc.) and who need a way to loosely couple stacks together as an alternative to nested stacks.
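A cross-stack reference can be sketched as two small templates, written here as Python dictionaries and serialized to JSON. The logical IDs (`AppVpc`, `AppSecurityGroup`), the export name `network-stack-VpcId`, and the CIDR block are hypothetical; the `Export` output field and the `Fn::ImportValue` intrinsic function are the CloudFormation features described above.

```python
import json

# Network stack: creates a VPC and exports its ID under a (hypothetical) name.
network_template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Resources": {
        "AppVpc": {
            "Type": "AWS::EC2::VPC",
            "Properties": {"CidrBlock": "10.0.0.0/16"},
        }
    },
    "Outputs": {
        "VpcId": {
            "Value": {"Ref": "AppVpc"},
            "Export": {"Name": "network-stack-VpcId"},
        }
    },
}

# Application stack: imports the exported VPC ID instead of hard-coding it.
app_template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Resources": {
        "AppSecurityGroup": {
            "Type": "AWS::EC2::SecurityGroup",
            "Properties": {
                "GroupDescription": "Application tier",
                "VpcId": {"Fn::ImportValue": "network-stack-VpcId"},
            },
        }
    },
}


def exported_names(template):
    """Collect the export names declared in a template's Outputs section."""
    outputs = template.get("Outputs", {}).values()
    return {out["Export"]["Name"] for out in outputs if "Export" in out}


def imported_names(node):
    """Recursively collect every name referenced through Fn::ImportValue."""
    found = set()
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "Fn::ImportValue" and isinstance(value, str):
                found.add(value)
            else:
                found |= imported_names(value)
    elif isinstance(node, list):
        for item in node:
            found |= imported_names(item)
    return found


app_template_json = json.dumps(app_template, indent=2)
```

The two helper functions are only a local consistency check that every import has a matching export; they are not part of CloudFormation.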

Ways to work with AWS CloudFormation Templates

- Simple JSON or YAML text editor

- CloudFormation Designer

- Is available via the AWS Management Console

- Lets you drag and drop resources onto a design area to automatically generate a JSON-formatted or YAML-formatted CloudFormation template.

Organizing your AWS CloudFormation Templates

- At a minimum: Separate network resources, security resources, and application resources into their own templates.

- Avoid sharing a single template across applications for resources of the same type unless you are deliberately centralizing control of that resource type.

AWS CloudFormation Templates

https://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/gettingstarted.templatebasics.html

Module 5 - Automating Your Infrastructure - Assessment

Module 6 - Decoupling Your Infrastructure

Slides 4-27, 28-34, 38-43

Slides 4-27, 35-43, 47-52

- VitalSource link: https://aws-academy-cctcollegedublin.vstbridge.com/#/reader/200-ACACAD-11-EN-M6SG?context_token=059b22a0-5a1f-0138-0cca-0e37da064d4d

Loose Coupling

Loose coupling refers to designing architectures with independent components. This reduces interdependencies, so a change or failure of one component does not affect other components. With loose coupling, you leverage managed solutions as intermediaries between layers of your system. This way, failures and scaling of a component or layer are automatically handled by the intermediary.

Two primary solutions for decoupling components are load balancers and message queues.

Example:

In the diagram, the solution on the left illustrates a collection of tightly coupled web and app servers. If one of the app servers goes down, each connection attempt between a web server and the unhealthy app server will cause an access error.

The solution on the left can be decoupled using load balancing. The solution on the right shows a load balancer (in this case, an Elastic Load Balancing instance) that routes requests between the web servers and the app servers. If one of the app servers goes down, the load balancer automatically directs all traffic to the healthy servers, and the infrastructure is not impacted.

As demonstrated in the figure, loose coupling simplifies the process of scaling. If you're using a distributed system at multiple tiers, a managed load balancer or queuing system provides a single endpoint where traffic can go and be retrieved.

Loose coupling also enables your environment to be more fault-tolerant, which is a key to high availability. With a loosely coupled distributed system, the failure of one component can be managed between tiers, so the fault does not spread beyond a single instance.
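The load balancer behavior described above can be illustrated with a toy model. This is not how Elastic Load Balancing is implemented; the class, the server names, and the round-robin policy are assumptions chosen to show the idea that callers only talk to one endpoint and unhealthy targets are skipped.

```python
class LoadBalancer:
    """Toy round-robin balancer that routes only to healthy targets."""

    def __init__(self, servers):
        self.health = {name: True for name in servers}
        self._counter = 0

    def mark(self, name, healthy):
        """Record the result of a (simulated) health check."""
        self.health[name] = healthy

    def route(self):
        """Return the next healthy target; callers never pick a server themselves."""
        healthy = [name for name, ok in self.health.items() if ok]
        if not healthy:
            raise RuntimeError("no healthy targets")
        target = healthy[self._counter % len(healthy)]
        self._counter += 1
        return target


balancer = LoadBalancer(["app-1", "app-2", "app-3"])
balancer.mark("app-2", False)  # app-2 goes down
targets = [balancer.route() for _ in range(10)]
```

The web tier keeps calling `route()` and never sees the failed server, which is the fault isolation the paragraph describes.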

Loose coupling Strategies

Design Services, Not Servers

Leverage the breadth of AWS services; don't limit your infrastructure to servers.

Serverless solutions and managed services offered by AWS can solve many needs without having to provision, configure, and manage an entire Amazon EC2 instance. Solutions like AWS Lambda, Amazon Simple Queue Service, Amazon DynamoDB, Elastic Load Balancing, Amazon Simple Email Service, and Amazon Cognito can replace server-based solutions, at a lower cost, with managed solutions that have a lower profile and are more performant.

Service-oriented architecture - SOA

An SOA is an architectural approach in which application components provide services to other components via a communications protocol. Services are self-contained units of functionality that can function regardless of the operating system, language, or location used by the service.

In general, a service-oriented architecture makes application modules available in a way that makes implementation details irrelevant. Application users are no longer concerned with implementation details like operating system, language, or location. Services are available via an endpoint.

The goal of using an SOA is to loosen the coupling of an application's services.

Microservices

- Microservices are small, fine-grained, independent processes in a services-oriented architecture.

- Each process focuses on completing one small task.

- Processes communicate to each other using language-agnostic APIs that leverage JSON or REST.

Microservices are minimal. To some people, minimal means "small enough to be rewritten in a few days;" to others, minimal means "it does one task and does it right." Minimal can also mean "as small as possible while adding business value." Ultimately, minimal is defined by each organization as "what makes the most sense."

The clear advantage of microservices is that you have a smaller surface area of code to maintain. Microservices should be complete. You can build with no external dependencies; all prerequisites are included in microservices, which enables loose coupling.

Advantages of Microservices

- Split features into individual components.

- Have smaller parts to iterate on.

- Have a reduced test surface area.

- Benefit from a lower risk due to change.

- Use individual horizontally scalable parts.

Comparing architectural styles

Traditional application architectures are monolithic—all components of the system are tightly coupled together. For example, the architecture on the left illustrates a traditional monolithic architecture, with a database containing inventory, statistics, orders, shopping cart contents, and customer suggestions.

In contrast, microservices-based architectures treat components as independent services connected together in a loosely coupled manner, as illustrated in the architecture shown on the right. This architecture implements more than one database for orders, uses multiple tables, and includes multiple backups of the inventory database. The cart, statistics, and suggestions are separate, so they can be easily scaled. With this microservices structure, if any part of the site goes down, the site can survive and continue to serve customers.

Communicating easily and reliably among components - Amazon Simple Queue Service (SQS)

This section will focus on Amazon Simple Queue Service (SQS) to decouple an infrastructure.

Amazon Simple Queue Service - SQS

Amazon Simple Queue Service, or SQS, is a fully managed message queueing service. Messages are generated by one component for consumption by another and can contain up to 256 KB of text in any format.

The Amazon SQS queue can support multiple readers and writers on the same queue. The queues are the repository for messages awaiting processing. They wait for messages and act as a buffer between senders and receivers.

This points to a key difference between Amazon SQS and Amazon Simple Notification Service, or SNS. Amazon SQS is pull, while Amazon SNS is push.

Loose coupling with Amazon SQS - An example

This example shows a queuing chain pattern that enables asynchronous processing. Asynchronous processing enables various processes to run at the same time.

In image processing, for example, the sequential operations of uploading, storing, and encoding the image, creating a thumbnail, and copyrighting are tightly linked to each other. This tight linkage complicates the recovery operations when a failure occurs.

In this diagram, you can see an Amazon EC2 automatic scaling group that completes six processes. To begin, it puts a message in the first queue. The message stays in the queue until the second group executes the get message, which pulls it out and processes the job. When the job is done, a put message is sent to the next queue, and the processed message is deleted. A get message is then sent to the third set of EC2 servers.

You can achieve loose coupling of systems by using queues between systems and exchanging messages that transfer jobs. This enables asynchronous linking of systems. This method lets you increase the number of virtual servers that receive and process the messages in parallel. If there is no image to process, you can configure Auto Scaling to terminate the excess servers.
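The queuing chain described above can be sketched locally with Python's in-process `queue.Queue` standing in for Amazon SQS. The stage functions (`encode`, `thumbnail`), the queue names, and the job layout are hypothetical; the point is only the get, process, put-to-next-queue cycle.

```python
from queue import Queue


def run_stage(inbox, outbox, work):
    """Drain a queue: get a message, process the job, put the result on the next queue."""
    while not inbox.empty():
        job = inbox.get()
        outbox.put(work(job))
        inbox.task_done()  # the processed message is finished with in this stage


def encode(job):
    return {**job, "steps": job["steps"] + ["encoded"]}


def thumbnail(job):
    return {**job, "steps": job["steps"] + ["thumbnail"]}


uploaded, encoded, finished = Queue(), Queue(), Queue()
for name in ["cat.png", "dog.png"]:
    uploaded.put({"image": name, "steps": ["uploaded"]})

# Each stage only knows its input and output queue, not the other stages.
run_stage(uploaded, encoded, encode)
run_stage(encoded, finished, thumbnail)

results = []
while not finished.empty():
    results.append(finished.get())
```

Because the stages share nothing but queues, each one could be run by its own group of workers and scaled independently.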

While you can use this pattern without cloud technology, the queue itself is provided as an AWS Cloud service called Amazon SQS, which makes using this pattern easier.

Some benefits are to:

- Use asynchronous processing to return responses quickly.

- Structure the system through loose coupling of Amazon EC2 instances.

- Handle performance and service requirements by merely increasing or decreasing the number of Amazon EC2 instances used in job processing.

- If an Amazon EC2 instance fails, a message remains in the queue service, which enables processing to continue immediately when the Amazon EC2 instance recovers. This produces a system that is robust to failure.

Queuing chain pattern is one of the cloud design patterns. Select the link to learn more. http://en.clouddesignpattern.org/index.php/CDP:Queuing_Chain_Pattern

Amazon SQS Benefits

- Scalable:

- Potentially millions of messages

- Reliable:

- All messages are stored redundantly on multiple servers and in multiple data centers

- Simultaneous read/write

- Secure:

- API credentials are needed

Distributed queues properties

This diagram shows the short polling behavior of a message being returned after a system component makes a receive request. Amazon SQS samples several servers, shown in gray, and returns the messages from the servers—messages A, C, D, and B, in this example. Message E is not returned for this particular request, but it would be returned for a subsequent request.

Message Order

Amazon SQS makes a best effort to preserve the order of messages, but because of the distributed nature of the queue, you might not receive messages in the exact order you sent them. If your system requires the order to be preserved, you should place sequencing information in each message, so you can reorder the messages on receipt.
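One way to use sequencing information on the receiving side is a small reorder buffer. This is a sketch under the assumption that the sender numbers messages consecutively starting at 1; the class and method names are hypothetical.

```python
class ReorderBuffer:
    """Hold out-of-order messages until every earlier sequence number has arrived."""

    def __init__(self, first_seq=1):
        self._next = first_seq
        self._pending = {}

    def accept(self, seq, body):
        """Store a message and return the bodies that can now be delivered in order."""
        self._pending[seq] = body
        ready = []
        while self._next in self._pending:
            ready.append(self._pending.pop(self._next))
            self._next += 1
        return ready


buffer = ReorderBuffer()
first = buffer.accept(2, "B")   # arrives early, must wait for message 1
second = buffer.accept(1, "A")  # releases both 1 and 2
third = buffer.accept(3, "C")
```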

At-Least-Once Delivery

Amazon SQS stores copies of your message on multiple servers for redundancy and high availability. On rare occasions, one of the servers storing a copy of a message might be unavailable when you receive or delete a message. If that occurs, the copy of the message will not be deleted on the unavailable server, and you might get that message copy again when you receive messages. Because of this, you must design your application to be idempotent—in other words, it must not be adversely affected if it processes the same message more than once.
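Idempotent processing can be sketched by remembering which message IDs have already been handled. The class, the in-memory set, and the "balance" example are assumptions for illustration; a real application would typically keep the processed IDs in durable storage.

```python
class IdempotentConsumer:
    """Apply each message at most once, even if it is delivered several times."""

    def __init__(self):
        self._seen = set()
        self.balance = 0

    def handle(self, message_id, amount):
        """Return True if the message was applied, False if it was a duplicate."""
        if message_id in self._seen:
            return False
        self._seen.add(message_id)
        self.balance += amount
        return True


consumer = IdempotentConsumer()
deliveries = [("m1", 10), ("m2", 5), ("m1", 10)]  # m1 is delivered twice
applied = [consumer.handle(mid, amount) for mid, amount in deliveries]
```

The duplicate delivery of `m1` is detected and ignored, so the final state is the same as if each message had arrived exactly once.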

Message Sample

How messages are retrieved from the queue depends on whether you are using short (standard) polling, which is the default behavior, or long polling. When you retrieve messages from the queue with short polling, Amazon SQS samples a subset of the servers, based on a weighted, random distribution. Then, it returns messages from just those servers. This means that a particular receive request might not return all your messages. Or, if you have a small number of messages in your queue, such as fewer than 1,000, a particular request might not return any of your messages, whereas a subsequent request will. If you keep retrieving from your queues, Amazon SQS will sample all the servers, and you will receive all your messages.
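The sampling behavior of short polling can be imitated with a small simulation. Several simplifications are assumed: servers are plain lists, sampling is uniform rather than weighted, and a received message is removed immediately instead of going through the visibility timeout. The function names are hypothetical.

```python
import random


def short_poll(servers, sample_size, rng):
    """Sample a subset of servers and return (and remove) only their messages."""
    sampled = rng.sample(range(len(servers)), sample_size)
    received = []
    for index in sampled:
        received.extend(servers[index])
        servers[index] = []  # simplification: received messages are consumed at once
    return received


def poll_until_empty(servers, sample_size, seed=0, max_polls=1000):
    """Keep polling; repeated requests eventually reach every server."""
    rng = random.Random(seed)
    polls = []
    for _ in range(max_polls):
        if not any(servers):
            break
        polls.append(short_poll(servers, sample_size, rng))
    return polls


# Five servers, four of them holding messages; each request samples only three.
servers = [["A"], ["B"], ["C", "D"], [], ["E"]]
polls = poll_until_empty(servers, sample_size=3)
all_received = sorted(message for poll in polls for message in poll)
```

Since a single request reaches only three of the four non-empty servers, at least one message is always left for a later request, which mirrors message E in the diagram.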

Amazon SQS - Visibility timeout

Visibility timeout prevents multiple components from processing the same message. Visibility timeout is the period of time in which a message is invisible to the rest of the application after an application component gets the message from the queue.

When a message is received, it is locked while being processed. This prevents it from being processed by other components. The component that receives the message processes it and then deletes it from the queue. If the message processing fails, the lock expires, and the message is available again. This protects the message to ensure it is always processed. For example, if an instance fails and the lock never times out, the message stays invisible. The timeout enables the message to become unlocked, and visible, so it can be processed by another instance. When the application needs more time for processing, the "invisible" timeout can be changed dynamically via the ChangeMessageVisibility operation.
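The lock-and-expire cycle can be modeled with a toy queue that takes the current time as an argument, so the behavior is easy to follow. This is a local simulation, not the SQS API; the class and method names are hypothetical, with `change_visibility` playing the role of the ChangeMessageVisibility operation.

```python
class SimulatedQueue:
    """Toy queue with a visibility timeout; time is passed in explicitly as seconds."""

    def __init__(self, visibility_timeout):
        self.visibility_timeout = visibility_timeout
        self._messages = {}  # message_id -> [body, invisible_until]

    def send(self, message_id, body):
        self._messages[message_id] = [body, 0.0]

    def receive(self, now):
        """Return the first visible message and hide it for the timeout period."""
        for message_id, entry in self._messages.items():
            if entry[1] <= now:
                entry[1] = now + self.visibility_timeout
                return message_id, entry[0]
        return None

    def change_visibility(self, message_id, now, timeout):
        """Extend (or shorten) the invisible period of a message being processed."""
        self._messages[message_id][1] = now + timeout

    def delete(self, message_id):
        """Called by the consumer once processing has succeeded."""
        self._messages.pop(message_id, None)


queue = SimulatedQueue(visibility_timeout=30)
queue.send("m1", "resize image")
first_receive = queue.receive(now=0)    # consumer 1 gets the message
while_locked = queue.receive(now=10)    # invisible to everyone else
after_timeout = queue.receive(now=30)   # consumer 1 failed; message is visible again
queue.change_visibility("m1", now=30, timeout=120)  # new consumer needs more time
still_locked = queue.receive(now=100)
queue.delete("m1")
after_delete = queue.receive(now=1000)
```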

Amazon SQS - Dead letter queue

A dead letter queue, or DLQ, is a queue of messages that could not be processed. A DLQ receives messages after a maximum number of processing attempts is reached. It provides the ability to sideline and isolate messages that cannot be processed for analysis out of band. A DLQ restores message flow when FIFO is enabled and a message cannot be processed.

A dead letter queue is like any other Amazon SQS queue. Messages can be sent to it and received from it. You can create a DLQ from the SQS API and the SQS console.
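The "maximum number of processing attempts" rule can be sketched with a toy queue. The class is hypothetical and simplified: here a message is moved to the dead letter list right after its last allowed attempt fails. The `max_receive_count` name mirrors the `maxReceiveCount` setting of an SQS redrive policy.

```python
class QueueWithDlq:
    """Toy queue that sidelines messages after too many failed attempts."""

    def __init__(self, max_receive_count):
        self.max_receive_count = max_receive_count
        self.main = []         # entries are [body, receive_count]
        self.dead_letter = []  # bodies that could not be processed

    def send(self, body):
        self.main.append([body, 0])

    def process_next(self, handler):
        """Try one message; return False when the main queue is empty."""
        if not self.main:
            return False
        entry = self.main.pop(0)
        entry[1] += 1
        try:
            handler(entry[0])
        except Exception:
            if entry[1] >= self.max_receive_count:
                self.dead_letter.append(entry[0])  # isolate for later analysis
            else:
                self.main.append(entry)            # becomes available again
        return True


processed = []


def handler(body):
    if body == "bad":
        raise ValueError("cannot process this message")
    processed.append(body)


queue = QueueWithDlq(max_receive_count=3)
queue.send("good")
queue.send("bad")
while queue.process_next(handler):
    pass
```

The poison message stops cycling through the main queue after three attempts, so the remaining messages keep flowing.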

Sharing a queue

- Queues can be shared with other AWS accounts and anonymously. The owner of the queue pays for shared queue access.

- A permission gives access to another person to use your queue in a particular way. A developer associates an access policy statement, which specifies the permissions being granted, with the queue to be shared. A policy is the actual document that contains the permissions you granted.

- Amazon SQS in each Region is independent in message stores and queue names; therefore, the messages cannot be shared between queues in different Regions.

- Amazon SQS provides APIs to create and manage the access policy statements, which are AddPermission, RemovePermission, SetQueueAttributes, and GetQueueAttributes. Refer to the latest API specification for more details.
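
To make the idea of an access policy statement concrete, here is a minimal sketch that builds a policy document granting another AWS account permission to use a queue. The function name, the statement ID, the account ID, and the queue ARN are all hypothetical; the document follows the standard IAM policy JSON layout and would be stored in the queue's `Policy` attribute.

```python
import json


def build_queue_sharing_policy(queue_arn, trusted_account_id, actions):
    """Return queue attributes holding a policy that shares the queue."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowTrustedAccount",  # hypothetical statement ID
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{trusted_account_id}:root"},
                "Action": [f"sqs:{action}" for action in actions],
                "Resource": queue_arn,
            }
        ],
    }
    return {"Policy": json.dumps(policy)}


if __name__ == "__main__":
    attributes = build_queue_sharing_policy(
        "arn:aws:sqs:us-east-1:123456789012:orders",  # placeholder ARN
        "210987654321",                                # placeholder account
        ["SendMessage"],
    )
    print(attributes["Policy"])
```

Granting only the specific actions that the other account needs (here just `SendMessage`) keeps the shared queue in line with least privilege.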

Amazon SQS use cases

Amazon SQS use cases include work queues, buffering batch operations, request offloading, fan-out, and Auto Scaling.

- Work Queues: For Work Queues, decouple components of a distributed application that might not process the same amount of work simultaneously.

- Buffering Batch Operations: By Buffering Batch Operations, you can add scalability and reliability to your architecture, and smooth out temporary volume spikes without losing messages or increasing latency.

- Request Offloading: For Request Offloading, move slow operations off interactive request paths by enqueueing the request.

- Fan-out: To use Fan-out, combine Amazon SQS with Amazon SNS to send identical copies of a message to multiple queues in parallel, for simultaneous processing.

- Auto Scaling: To benefit from Auto Scaling, use Amazon SQS queues to determine the load on an application, and then combine with Auto Scaling to scale the number of Amazon EC2 instances out or in, depending on the traffic volume.
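
For the Auto Scaling use case, one common way to turn queue depth into a fleet size is a "backlog per instance" calculation. The sketch below assumes you already know the number of visible messages and how many queued messages one instance can acceptably have waiting; the function name, parameter names, and default bounds are illustrative assumptions.

```python
import math


def desired_instance_count(visible_messages, acceptable_backlog_per_instance,
                           min_instances=1, max_instances=10):
    """Estimate how many instances are needed to work through the queue backlog."""
    if acceptable_backlog_per_instance <= 0:
        raise ValueError("acceptable_backlog_per_instance must be positive")
    needed = math.ceil(visible_messages / acceptable_backlog_per_instance)
    # Clamp to the fleet bounds so the group never scales to zero or without limit.
    return max(min_instances, min(max_instances, needed))


if __name__ == "__main__":
    for depth in (0, 250, 1500):
        print(depth, "messages ->", desired_instance_count(depth, 100), "instances")
```

With 250 messages and an acceptable backlog of 100 per instance, the estimate is 3 instances; an empty queue falls back to the minimum.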

Amazon SNS Characteristics

- Single published message

- Order is not guaranteed or relevant

- No recall

- HTTP/HTTPS retry

- 256 KB max per message

Amazon SNS and SQS comparison

Amazon SQS and Amazon SNS are both AWS messaging services. You should know the differences between them.

One key difference is that Amazon SNS does not offer message persistence. This is why using Amazon SQS with SNS is important. In a fan-out use case, Amazon SQS provides a place where the message will be caught or processed, because SQS is always available. Due to the visibility timeout and locks, Amazon SQS has message persistence, and messages are guaranteed to be processed.

As far as delivery differences, Amazon SNS allows applications to send time-critical messages to multiple subscribers through a push mechanism. Amazon SQS exchanges messages through a polling model—sending and receiving components are decoupled.

When comparing producer and consumer differences, Amazon SNS uses a publish and subscribe mechanism, while Amazon SQS is a send and receive model.

In addition, the SNS distribution model is one to many, and the Amazon SQS distribution model is one to one. Amazon SQS provides flexibility for distributed components of applications to send and receive messages, without requiring each component to be concurrently available.

Use case - Fan Out

Now consider another use case for fan-out. This scenario involves a mobile phone uploading a cute kitten picture. There is an SNS topic for the kitten picture. Once the upload is complete, the kitten picture is sent to three SQS queues:

- One to generate a thumbnail

- One to size the image for mobile, and

- One to size the image for the web

In this scenario, Amazon SNS and Amazon SQS work together to produce the fan-out. It is the same message, but it has three sets of instructions for one image.

Amazon SNS works closely with Amazon SQS. Both services provide different benefits for developers. Amazon SNS allows applications to send time-critical messages to multiple subscribers through a “push” mechanism, which eliminates the need to periodically check or “poll” for updates. Amazon SQS is a message queue service used by distributed applications to exchange messages through a polling model and can be used to decouple sending and receiving components without requiring each component to be concurrently available. By using Amazon SNS and Amazon SQS together, messages can be delivered to applications that require immediate notification of an event and also persisted in an Amazon SQS queue for other applications to process at a later time.
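
The fan-out pattern can be sketched without any AWS calls. In the toy model below, a hypothetical `Topic` class stands in for an SNS topic and plain `deque` objects stand in for the three SQS queues; the class, queue names, and message fields are illustrative assumptions.

```python
from collections import deque


class Topic:
    """Toy stand-in for an SNS topic that pushes each message to every subscriber."""

    def __init__(self, name):
        self.name = name
        self.subscribers = []

    def subscribe(self, queue):
        self.subscribers.append(queue)

    def publish(self, message):
        """Push an identical copy of the message to every subscribed queue."""
        for queue in self.subscribers:
            queue.append(dict(message))  # each queue gets its own copy
        return len(self.subscribers)


if __name__ == "__main__":
    topic = Topic("kitten-uploads")
    queues = {"thumbnail": deque(), "mobile": deque(), "web": deque()}
    for queue in queues.values():
        topic.subscribe(queue)

    topic.publish({"key": "kitten.jpg"})

    # Each worker polls its own queue independently and applies its own instructions.
    for task, queue in queues.items():
        print(task, "->", queue.popleft())
```

Because each queue holds its own copy, a slow or failed worker on one queue does not affect the other two.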

Communicating with loose coupling and Amazon DynamoDB

Amazon API Gateway

Serverless Architectures

Decoupling Examples

Module 6 - Decoupling Your Infrastructure - Assessment

Module 7 - Designing Web Scale Media

Slides: 4-14,20-41,51-63

- VitalSource link: https://aws-academy-cctcollegedublin.vstbridge.com/#/reader/200-ACACAD-11-EN-M7SG?context_token=3291a610-5a1f-0138-d9f2-7210a2c4c62a

Web-based applications must be available, and they shouldn't take long to load. If your applications are unavailable, it could lead to revenue loss. It could also affect customer loyalty and brand image.

Let’s take a moment to review Amazon.com and consider where we might deliver content:

- In the address bar, you can note that the application is transported over https://, or port 443. This tells you that Amazon.com uses Secure Sockets Layer or SSL.

- You can then note that the application content is dynamic because it's personalized for Chris, which tells you that it has zero time to live or TTL.

- Time To Live (TTL) serves to tell the recursive server or local resolver how long it should keep the record in its cache. The longer the TTL, the longer the resolver holds that information in its cache. The shorter the TTL, the shorter amount of time the resolver holds that information in its cache.

- You can also search for items by entering user input. The static images can be cached and stored in Amazon S3.

- With the caching and acceleration technology of Amazon CloudFront, we can deliver all of your content, including static images, videos, and content based on user input.

- How should web-accessible content be stored? Let’s discuss how to store content in Amazon S3.

Part 1 - Storing Web-Accessible Content with Amazon S3

Static assets (content that isn't going to change, such as text, images, and video) can be stored in Amazon S3.

Problems: Let’s consider a pattern where an Amazon EC2 instance is being used to serve static images and videos, which is not a proper use for that Amazon EC2 instance. Some of the problems are:

- Delivery of large files from a web server can become a problem in terms of network latency.

- User-generated content needs to be distributed across all your web servers.

Solution: You can store static asset files (images, videos, CSS) in an Amazon S3 bucket, and serve the files from there.

- You know that Amazon S3 will be durable and that it will serve content quickly.

- Amazon S3 performs backups in at least three different data centers for extremely high durability.

- Data that is stored in an Amazon S3 bucket is replicated across multiple data centers in a geographical region.

- When you use Amazon S3 to store static content, you don’t need to worry about scaling out an Amazon EC2 instance to serve videos and images.

- The use of Amazon S3 eliminates the need to worry about network loads and data capacity on your web servers.

- Objects that are stored in Amazon S3 can be accessed directly by users if the bucket is enabled for public web hosting.

- You can also use Amazon CloudFront, which is a content delivery network (CDN) that can be used as a global caching layer in front of Amazon S3 to accelerate content to your end-users.

Choosing a Region for Amazon S3

It is important to carefully select the AWS Region where you store an object because response latencies grow when requests have to travel long distances over the internet.

So, when choosing a region for Amazon S3 you must consider:

- Geographical proximity to your users.

- You should also collocate it with your Amazon EC2 instances, and with other AWS services that upload and download bucket objects.

- Collocating all of your relevant AWS services and Amazon S3 buckets in the same Region can help reduce costs and latencies.

Part 2 - Caching with Amazon CloudFront

Caching is the process of temporarily storing data or files in an intermediary location between the requester and the permanent storage. The purpose of caching is to make responding to future requests faster and reduce network throughput.

It is an architectural best practice to implement caching at multiple layers of an architecture to reduce cost and latency, and to increase the performance of applications. It is more cost-effective to distribute files from CloudFront than from an Amazon S3 bucket.

CloudFront is a content delivery network (CDN) that delivers cached content (static and dynamic web content such as HTML files, CSS files, JavaScript files, and image files) through a worldwide network of data centers that are called edge locations.

Using CloudFront you can ensure that your content is close to users around the world. This geographic proximity means lower latency when you serve content to users. This makes it more cost-effective and faster for better user experience, as well as putting less stress on your core infrastructure.

CloudFront is particularly important when your application will deliver heavy static content (videos, images) to users around the world.

For instance, in the anti-pattern that is shown on the slide, your Amazon S3 bucket does not use a caching service. Three users request a file from one of your Amazon S3 buckets, one at a time. The file is delivered to each user in the same way. The result is that each request takes the same amount of time to complete. This process also incurs costs for the three separate times that the file is delivered for each request.

Let’s compare the process in the anti-pattern with a better pattern. In the best practice pattern, your infrastructure places Amazon CloudFront, which offers caching, in front of Amazon S3. In this scenario, the first request checks for the file in CloudFront. If the request does not find the file in CloudFront, it pulls the file from Amazon S3, and stores a copy of the file in CloudFront at the edge location that is closest to the user. The request then sends a copy of the file to the user who made the request. Now, when any other users request that file, it's retrieved from the closer edge location in CloudFront. The request does not have to go to Amazon S3 to get the file.

This slide shows another way to use Amazon CloudFront. In general, you only cache static content. However, dynamic or unique content affects the performance of your application. Depending on the demand, you might still get some performance gain by caching the dynamic or unique content in Amazon S3.

For example, if there’s something that needs to be personalized, the time to live is zero. A time to live of zero tells CloudFront that each and every time it sees the dynamic content, it needs to go back to its origin because it will change a lot.

At the same time, the time to live can be set to 5 minutes or 24 hours, depending on how long the content is good for. CloudFront can pull content from Amazon S3 so that it can save the load back to an on-premises data center. When you save a load, you can have smaller instances and save money, and resources can perform more efficiently.
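
The effect of the time to live on origin traffic can be sketched with a small cache model. This is not how CloudFront is implemented: the `EdgeCache` class, the `fetch_from_origin` callback, and the explicit `now` argument (in seconds) are hypothetical and exist only to show the hit, miss, and expiry logic.

```python
class EdgeCache:
    """Toy edge cache that forwards misses and expired entries to the origin."""

    def __init__(self, fetch_from_origin, ttl_seconds):
        self.fetch_from_origin = fetch_from_origin
        self.ttl_seconds = ttl_seconds
        self._entries = {}  # path -> (content, expires_at)
        self.origin_requests = 0

    def get(self, path, now):
        entry = self._entries.get(path)
        if entry is not None and now < entry[1]:
            return entry[0]  # cache hit: served from the edge
        # Cache miss or expired entry: go back to the origin.
        self.origin_requests += 1
        content = self.fetch_from_origin(path)
        if self.ttl_seconds > 0:  # a TTL of zero means nothing is kept
            self._entries[path] = (content, now + self.ttl_seconds)
        return content


if __name__ == "__main__":
    static = EdgeCache(lambda path: f"bytes of {path}", ttl_seconds=300)
    for second in (0, 60, 120):
        static.get("/images/kitten.jpg", now=second)
    print("origin requests with TTL 300:", static.origin_requests)

    personalized = EdgeCache(lambda path: f"page for {path}", ttl_seconds=0)
    for second in (0, 60, 120):
        personalized.get("/home", now=second)
    print("origin requests with TTL 0:", personalized.origin_requests)
```

With a 300-second TTL, three requests within two minutes reach the origin once; with a TTL of zero, every request goes back to the origin.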

A cloud architecture for web hosting

This architecture diagram shows how you can use CloudFront in front of your hosting architecture to decrease the number of times CloudFront must redirect requests to the load balancer, and pull content.

Let’s take a look:

- We have our Elastic Load Balancing load balancers in place with CloudFront.

- End users are directed to CloudFront via Amazon Route 53.

- The load balancers pull content and data from the Amazon S3 bucket, Amazon RDS, or Amazon ElastiCache. This can serve as a read replica if the content is cached there.

How to enable CloudFront

- First, you specify origin servers, like an Amazon S3 bucket or your own HTTP server. CloudFront gets your files from the origin servers, and the files will then be distributed from CloudFront edge locations all over the world.

- Second, you upload your files to your origin servers. Your files, which are also known as objects, typically include webpages, images, and media files.

- Third, you create a CloudFront distribution, which tells CloudFront which origin servers to get your files from when users request the files through your website or application. At the same time, you specify details, such as whether you want CloudFront to log all requests, and whether you want the distribution to be enabled as soon as it's created.

- Fourth, CloudFront assigns a domain name to your new distribution. You can see the domain name in the CloudFront console. The domain name can also be returned in the response to a programmatic request, like a request from an application programming interface, or API.

- Fifth, CloudFront sends your distribution's configuration (but not your content) to all of its edge locations.

Part 3 - Managing NoSQL databases

We'll learn about the features of managing NoSQL databases, or DBs.

Part 4 - Storing relational data in Amazon RDS

https://aws.amazon.com/rds/?nc1=h_ls

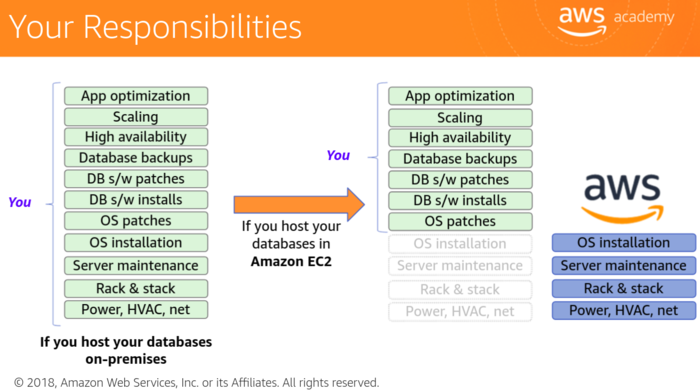

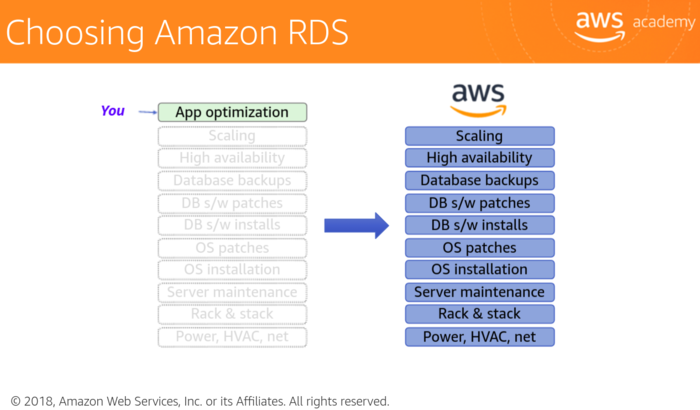

Amazon RDS is a good option for developers because it allows developers to focus on innovation, such as query construction and optimization.

Using Amazon RDS lets you offload the following operational responsibilities:

- Migration

- Backup and recovery

- Patches

- Software upgrades

- Storage upgrades

- Frequent server upgrades

- Hardware crashes

What about security for Amazon RDS?

- You can control an Amazon RDS database instance by enabling security for Amazon RDS.

- If you enable security, the backups or snapshots of your data that you create will automatically be encrypted.

- Encrypted instances are currently available for all database engines that are supported in Amazon RDS.

- Use AWS Identity and Access Management (IAM) to control which Amazon RDS operations each individual user can call.

- You can encrypt connections between your application and your DB instance by using SSL/TLS.

- Transparent Data Encryption (TDE) is supported for SQL Server and Oracle.

- You can receive notifications of important events that occur on your Amazon RDS instance.

Amazon RDS database engines

- Amazon Aurora

- Amazon RDS for PostgreSQL

- Amazon RDS for MySQL

- Amazon RDS for MariaDB

- Amazon RDS for Oracle

- Amazon RDS for Microsoft SQL Server

Amazon Aurora

https://aws.amazon.com/rds/aurora/?nc1=h_ls

Amazon Aurora is a relational database that is delivered by using service-oriented architectures.

- It is similar to how Amazon DynamoDB is a managed version of an Amazon EC2-hosted NoSQL engine.

- It is scalable and highly available, and it uses Amazon S3.

- It has drop-in compatibility with MySQL v5.6.

- Existing applications "just work."

- Amazon RDS customers that use MySQL can select options for migration.

- Amazon EC2 or on-premises MySQL users can import their data file.

- And it is compatible with PostgreSQL.

Module 7 - Designing Web Scale Media - Assessment

Module 14 - Troubleshooting

Slides 4-14

Module 15 - Design Patterns and Sample Architectures

Slides 4-11

Pillars of the AWS Well-Architected Framework

- Operational Excellence

- Security

- Reliability

- Performance Efficiency

- Cost Optimization

Module 9 - Well-Architected Pillar 1 - Operational Excellence

The ability to run and monitor systems to deliver business value and to continually improve supporting processes and procedures.

The three best practices areas for operational excellence in the cloud are to prepare, to operate, and to evolve:

- Prepare: It's required to drive operational excellence.

- Key service:

- AWS Config and AWS Config Rules can be used to create standards for workloads and to determine whether environments are compliant with those standards before they are put into production

- AWS CloudFormation: You can implement your infrastructure as code. It enables you to have consistent and templated sandbox environments for development, test, and production.

- Data on the use of resources, application programming interfaces, and network flow logs can be collected using Amazon CloudWatch, AWS CloudTrail, and Amazon Virtual Private Cloud or Amazon VPC-Flow Logs.

- Operate: It's measured by the achievement of business and customer outcomes.

- Key service:

- Amazon CloudWatch allows you to monitor the operational health of a workload. You can generate dashboard views of your metrics.

- Evolve: It's required to sustain operational excellence.

- Key service:

- Amazon Elasticsearch allows you to analyze your log data to gain actionable insights quickly and securely.

- AWS developer tools (AWS CodeStar, AWS CodeCommit, AWS CodeBuild, AWS CodeDeploy): You can implement build, test, and deployment activities for continuous delivery. You can use the results of deployment activities to identify opportunities for improving both deployment and development.

Design principles:

- Perform operations with code

- There are many benefits of using code: automation in response to events, fewer human errors, etc.

- Make frequent, small, reversible changes

- Refine standard operations procedures frequently

- Learn from all operational failures

- Annotate documentation

- Anticipate failure

Anti-Patterns of the operational pillar:

- Manual changes: mistakes can happen

- Technology metrics

- Batch changes

- No time to learn from mistakes: if a mistake is made, always take the time to understand what went wrong, to make sure it doesn't happen again

- Stale documentation

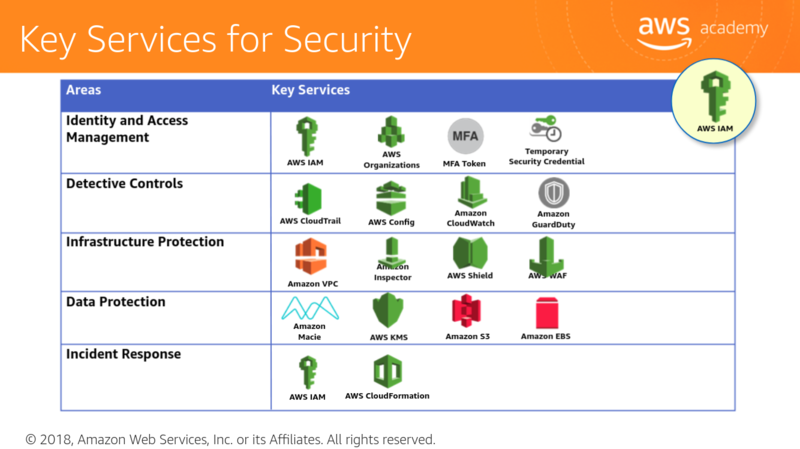

Module 10 - Well-Architected Pillar 2 - Security

Slides 4-32, 44-66, 74-93

The ability to protect information, systems, and assets while delivering business value through risk assessments and mitigation strategies.

The goal of the security pillar is to keep the impact of any type of failure to the smallest possible area. By preparing your system for the worst, you can implement a variety of mitigation strategies for the different components of your infrastructure and applications.

Mitigation strategies include:

- Identity and access management

- Detective controls

- Infrastructure protection

- Data protection

- Incident response

Design principles:

- Implement a strong identity foundation: You should use the principle of least privilege. You should also enforce the separation of duties, and make sure that you grant appropriate authorization.

- Enable traceability with monitoring, using alerts, and auditing actions or changes to your environment in real-time.

- Apply security at all layers: For example, you can implement security controls on every virtual server, load balancer, and network subnet.

- Automate security best practices

- Protect data in transit and at rest

- Prepare for security events
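
As an illustration of the least-privilege principle listed above, the sketch below builds an IAM policy document that allows only reading objects under one prefix of one bucket, and nothing else. The function name, bucket name, and prefix are hypothetical; the document uses the standard IAM policy JSON layout.

```python
import json


def read_only_prefix_policy(bucket_name, prefix=""):
    """Return a policy document that allows only s3:GetObject under one prefix."""
    resource = f"arn:aws:s3:::{bucket_name}/{prefix}*"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject"],  # no write, delete, or list permissions
                "Resource": resource,
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder bucket and prefix for illustration only.
    print(json.dumps(read_only_prefix_policy("example-reports", "2024/"), indent=2))
```

Anything not explicitly allowed is denied by default, so a user with only this policy cannot modify or delete the objects.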

Key Services for Security

| Areas | Key Services |
|---|---|
| Identity and Access Management | AWS IAM, AWS Organizations, MFA Token, Temporary Security Credential |
| Detective Controls | AWS CloudTrail: It records application programming interface, or API, calls to AWS services.<br />AWS Config: It provides a detailed inventory of your AWS resources and configurations.<br />Amazon CloudWatch: It's a monitoring service for AWS resources.<br />Amazon GuardDuty: It's an intelligent threat detection service that provides customers with an accurate way to continuously monitor and protect their AWS accounts and workloads. This service analyzes billions of events across your AWS accounts. |
| Infrastructure protection | Amazon VPC<br />Amazon Inspector<br />AWS Shield: It is a managed Distributed Denial of Service (DDoS) protection service that safeguards applications running on AWS.<br />AWS WAF (Web Application Firewall): It helps protect your web applications or APIs against common web exploits that may affect availability, compromise security, or consume excessive resources. |
| Data protection | Elastic Load Balancing (ELB), Amazon Elastic Block Store (Amazon EBS), Amazon S3, Amazon Relational Database Service (Amazon RDS): These services provide encryption capabilities that protect your data in transit and at rest.<br />CloudFront Custom SSL Support: By default, your content is delivered to viewers over HTTPS by using CloudFront distribution domain name such as:<br />How to make content private:<br />Amazon Macie: It automatically discovers, classifies, and protects sensitive data.<br />AWS Key Management Service (AWS KMS): This is a managed encryption service that enables you to easily encrypt your data. It can be used to create and control keys that are used for encryption.<br />AWS Cloud Hardware Security Module (AWS CloudHSM): AWS CloudHSM is a cloud-based hardware security module (HSM) that enables you to easily generate and use your own encryption keys on the AWS Cloud. It helps you to meet corporate, contractual, and regulatory compliance requirements for data security by using dedicated hardware security module appliances within the AWS Cloud. The CloudHSM appliance helps you to comply with strict cryptographic key management requirements.<br />Data at rest with Amazon S3: |
| Incident response | AWS IAM: It should be used to grant the appropriate authorization to incident response teams.<br />AWS CloudFormation: It can be used to create a trusted environment for conducting investigations. |

Exercise - How to improve an architecture

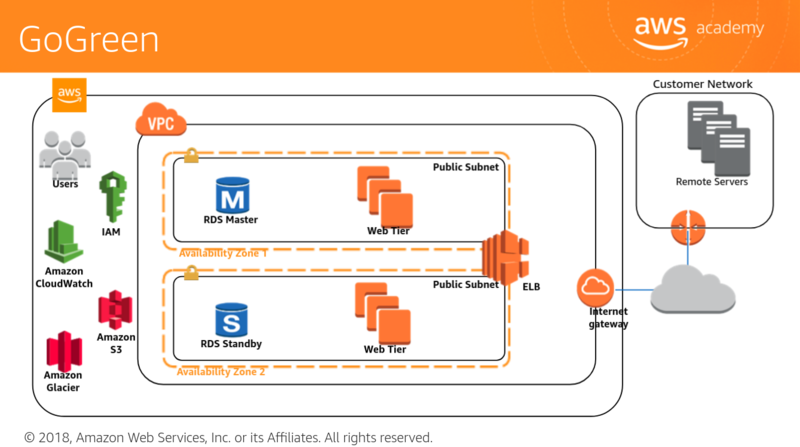

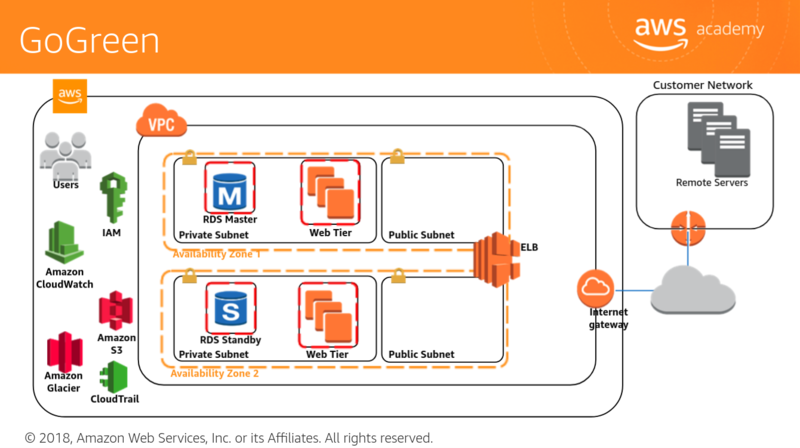

- GoGreen purchased a small company that was running a hybrid cloud architecture.

- As their cloud expert, you were asked to review their design and determine how to improve the application in accordance with the Well-Architected Framework.

- Next, you will review a sample diagram and ask questions to identify where improvements can be made to the security of the system.

- You should consider the recommended security enhancements that you would make in accordance with the Security pillar of the Well-Architected Framework.

Take some time to review this sample diagram and ask questions that will help you identify where improvements can be made to the security of the system.

A few items to consider regarding security include:

- Moving to a private subnet.

- Implementing AWS Direct Connect instead of using internet routing.

- Verify the principles of least privilege on users.

- Ensure separation of duties through IAM.

- Ensure that Amazon CloudWatch has monitors for utilization, abnormal traffic, and AWS CloudTrail Logs.

- Enable AWS CloudTrail.

- Verify access control list policies and bucket policies for Amazon S3.

- Verify Amazon S3-SSE usage.

- Ensure users do not have delete privileges for Amazon Glacier.

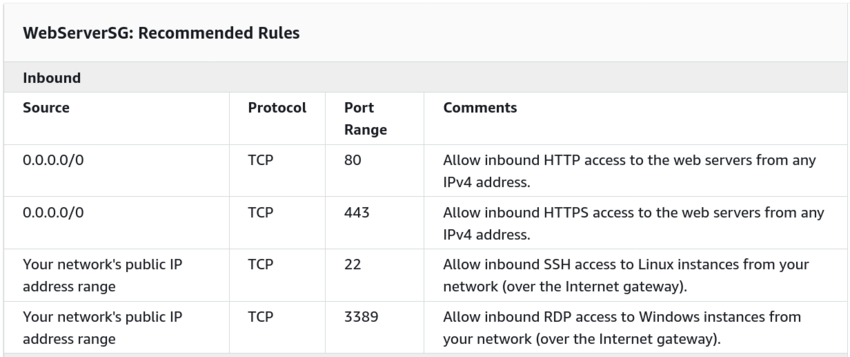

- Consider using security groups for the web and Amazon RDS.

- And ensure that SSH requests come from only the administrator IP address.

Some security pillar questions

- How are you encrypting and protecting your data at rest?

- Use AWS service-specific controls, such as:

- Amazon S3 SSE

- Amazon EBS Encrypted Volumes

- Amazon RDS Transparent Data Encryption (TDE)

- Use client-side techniques, such as:

- SDK-supported

- OS-supported

- Windows BitLocker

- dm-crypt

- Use a solution from the AWS Marketplace or an APN Partner

- How are you encrypting and protecting your data in transit?

- SSL/TLS enabled AWS APIs

- SSL/TLS or equivalent is used for communication

- VPN based solution

- Private connectivity (AWS Direct Connect).

- AWS Marketplace solution

- How are you protecting access to and use of the AWS root account credentials?

- Only use AWS root account credentials for minimal required activities

- Associate an MFA hardware device with the AWS root account.

- Use an AWS Marketplace solution

- How are you defining roles and responsibilities of system users to control human access to the AWS Management Console and API?

- IAM users and groups

- SAML integration

- Web Identity Federation

- AWS Security Token Service (STS)

- IAM roles for cross-account access

- AWS Marketplace solution

- Define and enforce employee life-cycle policies

- Clearly define users, groups, and roles

- Grant only the minimum privileges needed to accomplish business requirements.

- How are you managing keys and credentials?

- Anti-pattern

- Hard-code secret keys and credentials into scripts and source code

- Best practices:

- Use:

- An appropriate key and credential rotation policy

- AWS CloudHSM

- AWS server-side techniques with AWS managed keys

- AWS Marketplace solutions

- How are you limiting automated access to AWS resources (e.g. applications, scripts, and third-party tools or services)?

- Anti-pattern:

- Hard-code the credential into scripts and source code

- Best practices:

- IAM roles for Amazon EC2

- IAM user credential

- SAML Integration

- AWS Security Token Services (STS)

- OS-specific controls for EC2 instances

- AWS Marketplace solutions

- How are you enforcing network and host-level boundary protection?

- Enforce role-based access using security groups with minimal authorizations

- Run the system in one or more VPCs

- Trusted VPC access via a private mechanism, such as:

- Virtual private network (VPN)

- IPsec tunnel

- AWS Direct Connect

- AWS Marketplace solution

- Define and enforce employee life-cycle policies

- Clearly define users, groups, and roles

- Grant only the minimum privileges needed to accomplish business requirements.

- How are you enforcing AWS service level protection?

- Configure credential with least privilege.

- Have separation of duties

- Audit permissions periodically

- Define and use service-specific requirements.

- Define resource requirements for sensitive API calls, such as requiring:

- MFA authentication

- Encryption

- Use an AWS Marketplace solution

- How are you protecting the integrity of the operating system on your Amazon EC2 instances?

- Use controls for Amazon EC2 instances:

- File integrity

- Host-based intrusion detection

- Use a solution from:

- The AWS Marketplace

- An APN partner

- Use custom Amazon Machine Images (AMIs) or configuration management tools (e.g., Puppet or Chef) that are secured by default.

- How are you capturing and analyzing AWS logs?

- Capture logs using:

- AWS CloudTrail

- Amazon CloudWatch logs

- Elastic Load Balancing (ELB) logs

- Amazon Virtual Private Cloud (VPC) flow logs

- Amazon S3 bucket logs

- Other AWS service-specific log sources

- Operating system or third-party application logs

- Solutions from the AWS Marketplace

Additional resources

- Documentation

- Whitepapers

- Videos

Module 11 - Well-Architected Pillar 3 - Reliability

The ability of a system to recover from infrastructure or service disruptions, dynamically acquire computing resources to meet demand and mitigate disruptions such as misconfigurations or transient network issues.

The goal of reliability is to keep the impact of any type of failure to the smallest area possible. By preparing your system for the worst, you can implement a variety of mitigation strategies for the different components of your infrastructure and applications.

Reliability focuses on the ability to recover from failures and meet demand in three areas:

- Foundations: Foundational elements that include setup and cross-project requirements.

- Change management: How you manage change

- Failure management: Recovering from failure by using failure management.

Design principles:

- Test recovery procedures

- Automatically recover from failure

- Scale horizontally to increase aggregate system availability

- Stop guessing capacity

- Manage change in automation

Key services for reliability:

| Areas | Key services |
|---|---|
| Foundations | Amazon CloudWatch: This is the key service to ensuring reliability. It gives you a holistic view of the performance of your workload.<br />AWS IAM: Enables you to securely control access to AWS services and resources. |
| Change management | AWS CloudTrail: AWS CloudTrail is a service that enables governance, compliance, operational auditing, and risk auditing of your AWS account. With CloudTrail, you can log, continuously monitor, and retain account activity related to actions across your AWS infrastructure. |
| Failure management | AWS CloudFormation: Provides templates for the creation of AWS resources and provisions them in an orderly and predictable fashion. |

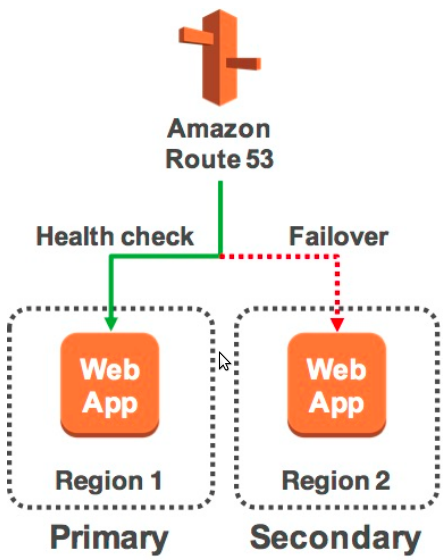

Making your infrastructure more reliable - Disaster recovery on AWS

Cloud_Computing#Disaster Recovery

Example architectural patterns for Disaster Recovery on AWS:

- Backup and Restore

- Pilot Light

- Fully working Low-Capacity Standby

- Multi-Site Active-Active

Backup and Restore

Advantages:

- Simple to get started

- Extremely cost-effective (mostly backup storage).

In case of disaster:

- Retrieve backups from Amazon S3

- Boot up required infrastructure

- Amazon EC2 instances with prepared AMIs, Elastic Load Balancing, etc

- Use AWS CloudFormation to automate the deployment of core networking

- Restore system from backup

- Switch over to the new system

- Adjust DNS records to point to AWS

Objectives:

- RTO: as long as it takes to bring up infrastructure and restore the system from backups.

- RPO: time since last backup.
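
The two objectives can be checked against a concrete incident with simple timestamp arithmetic. The sketch below is a worked example with made-up times: the data-loss window is the time between the last backup and the disaster, and the downtime is the time between the disaster and the restored service. Function names and values are hypothetical.

```python
from datetime import datetime, timedelta


def recovery_outcome(last_backup, disaster, service_restored):
    """Return (data_loss_window, downtime) for one incident."""
    if not last_backup <= disaster <= service_restored:
        raise ValueError("timestamps must be in chronological order")
    return disaster - last_backup, service_restored - disaster


def meets_objectives(outcome, rpo, rto):
    """Compare the achieved outcome against the RPO and RTO targets."""
    data_loss_window, downtime = outcome
    return data_loss_window <= rpo and downtime <= rto


if __name__ == "__main__":
    outcome = recovery_outcome(
        last_backup=datetime(2024, 1, 1, 0, 0),       # nightly backup
        disaster=datetime(2024, 1, 1, 6, 0),          # failure six hours later
        service_restored=datetime(2024, 1, 1, 9, 0),  # restored after three hours
    )
    print("data loss window:", outcome[0], "downtime:", outcome[1])
    print("meets RPO 24h / RTO 4h:",
          meets_objectives(outcome, timedelta(hours=24), timedelta(hours=4)))
```

With nightly backups the data-loss window can approach 24 hours, which is why backup frequency is the main lever for RPO in the Backup and Restore pattern.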

Pilot Light

Advantages:

- Very cost-effective (uses fewer 24/7 resources).

In case of disaster:

- Automatically bring up resources around the replicated core data set.

- Scale the system as needed to handle current production traffic.

- Switch over to the new system

- Adjust DNS records to point to AWS

Objectives:

- RTO: as long as it takes to detect the need for DR and automatically scale up replacement system

- RPO: Depends on replication type

Fully working Low-Capacity Standby

Advantages:

- Can take some production traffic at any time

- Cost savings (IT footprint smaller than full disaster recovery)

In case of disaster:

- Immediately fail over the most critical production load

- Adjust DNS records to point to AWS

- Auto Scale the system further to handle all production load

Objectives:

- RTO: for critical load: as long as it takes to failover; for all other loads, as long as it takes to scale further.

- RPO: Depends on replication type

Multi-Site Active-Active

Advantages:

- Can take all production load at any moment.

In case of disaster:

- Immediately fail over all production load.

Objectives:

- RTO: as long as it takes to failover.

- RPO: depends on replication type.

Reliability pillar questions

- How are you managing AWS service limits for your account?:

- Monitor and manage limits by evaluating your potential usage on AWS, increase your regional limits appropriately, and allow planned growth in usage.

- Set up automated monitoring by implementing tools such as SDKs to alert you when thresholds are being approached

- Be aware of fixed service limits that are unchangeable and architect around those

- How are you planning your network topology on AWS?:

- Use highly available connectivity to AWS with

- Multiple AWS Direct Connect circuits

- Multiple VPN tunnels

- AWS Marketplace appliances

- Size your IP subnet allocation to be large enough to accommodate future expansion

- Use highly available connectivity to the system with:

- Highly available load balancing and/or proxy

- DNS-based solutions

- AWS Marketplace appliances

- Use non-overlapping private IP ranges between all of your environments in and out of the cloud.

- Do you have an escalation path to deal with technical issues?:

- Plan ahead with an ongoing engagement or relationship with AWS support or an APN partner

- Use AWS Support APIs by integrating them with your internal monitoring and ticketing systems.

- How does your system adapt to changes in demand?:

- Use automated scaling features from services such as:

- Amazon S3

- Amazon CloudFront

- Auto Scaling

- Amazon DynamoDB

- AWS Elastic Beanstalk

- Adopt a load testing methodology to measure if the scaling activity will meet your application requirements

- How are you monitoring AWS resources?:

- Monitor your application with:

- Amazon CloudWatch

- Third-party tools

- Configure notifications for when significant events occur.

- Use automation to take action when failure is detected

- Perform frequent reviews of the system based on significant events to evaluate the architecture

- How are you executing change management?:

- Perform automated change management for deployments and patching

- How are you backing up your data?:

- Back up data that is important with a required RPO using:

- Amazon S3

- Amazon EBS snapshots

- Third-party software

- Secure and/or encrypt backups

- Automate backups using:

- AWS features

- AWS Marketplace solutions

- Third-party software

- Validate that the backup process implementation meets RTO and RPO through periodic recovery testing.

- How are you planning for recovery?:

- Define RTO and RPO objectives

- Establish a disaster recovery strategy

- Avoid configuration drift by ensuring that AMIs are up-to-date at the DR site or region.

- Request an increase of service limits with the DR site to accommodate failover.

- Regularly test and validate disaster recovery scenarios to ensure RTO and RPO are met.

- Automate system recovery using AWS and/or third-party tools.
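The recovery-testing points above (validating that backups and DR drills meet RTO and RPO) can be sketched as a small check. This is a minimal illustration, not an AWS API: the function names, the backup timestamps, and the measured recovery time are all hypothetical, and the worst-case data loss is approximated as the longest gap between consecutive backups.

```python
from datetime import datetime, timedelta


def worst_case_data_loss(backup_times):
    """Longest gap between consecutive backups (an approximation of data at risk)."""
    ordered = sorted(backup_times)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    return max(gaps, default=timedelta(0))


def meets_objectives(backup_times, measured_recovery, rpo, rto):
    """Compare drill results against the RPO and RTO targets."""
    return {
        "rpo_met": worst_case_data_loss(backup_times) <= rpo,
        "rto_met": measured_recovery <= rto,
    }


# Hypothetical drill data: three backups and a 45-minute measured recovery.
backups = [
    datetime(2026, 1, 1, 0),
    datetime(2026, 1, 1, 6),
    datetime(2026, 1, 1, 14),
]
result = meets_objectives(
    backups,
    measured_recovery=timedelta(minutes=45),
    rpo=timedelta(hours=6),
    rto=timedelta(hours=1),
)
print(result)  # {'rpo_met': False, 'rto_met': True}
```

In this made-up drill the 8-hour gap between the last two backups exceeds the 6-hour RPO, which is the kind of finding periodic recovery testing is meant to surface.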

Module 12 - Well-Architected Pillar 4 - Performance Efficiency

The ability to use computing resources efficiently to meet system requirements, and to maintain that efficiency as demand changes and technologies evolve.

- Selection: the right resource type for computing, storage, databases, and networking.

- Review: your selections using benchmarking and load testing to validate your system.

- Monitoring: to know when your performance degrades, so you can take actions before it impacts customers

- Tradeoffs: make architectural tradeoffs to maximize performance efficiency. For example, you might use a cache to improve performance and reduce the load on your database.

Design principles:

- Democratize advanced technologies: Technologies that are difficult to implement can become easier to consume by pushing the knowledge and complexity into the cloud vendor's domain. Rather than having your IT team learn how to host and run a new technology, they can consume it as a service. For example, NoSQL databases and machine learning are technologies that require expertise. In the cloud, these technologies become services that your team can consume while focusing on product development rather than resource provisioning and management.

- Go global in minutes: You can easily deploy your system in multiple locations around the world with just a few clicks.

- The cloud also enables you to use serverless architectures such as AWS Lambda, which removes the need for you to run and maintain servers to process traditional compute activities. For example, storage services can act as static websites, removing the need for web servers, and event services can host code for you.

- With the cloud, you can experiment more often: With virtual and automatable resources, you can quickly carry out comparative testing using different types of instances, storage, and configurations.

- You can achieve mechanical sympathy: In the cloud, you can use the technology approach that aligns best with what you are trying to achieve. For instance, if you are accustomed to using a SQL database solution, you might experiment and discover that NoSQL is a better solution.

Key services for performance efficiency:

| Areas | Key services (Amazon CloudWatch is one of the most important) |
|---|---|
| Selection | The selection area encompasses compute, storage, database, and network services. For storage, Amazon EBS provides a wide range of storage options, such as solid-state drives (SSDs). Amazon S3 provides serverless content delivery. Amazon DynamoDB provides single-digit millisecond latency at any scale. Amazon VPC endpoints and AWS Direct Connect can reduce network distance and jitter. |
| Review | The AWS Blog and the What's New section on the AWS website are resources for learning about newly launched features and services. |
| Monitoring | Amazon CloudWatch and AWS Lambda. You can use CloudWatch with AWS Lambda to trigger actions. |
| Tradeoffs | Amazon CloudFront, Amazon ElastiCache, and AWS Snowball are services that allow you to improve performance. In addition, read replicas in Amazon RDS can help you scale read-heavy workloads. |

Infrastructure efficiency improvements

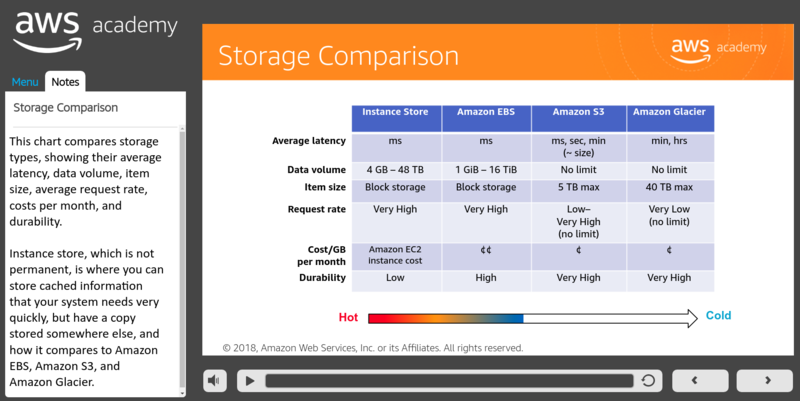

Storage comparison:

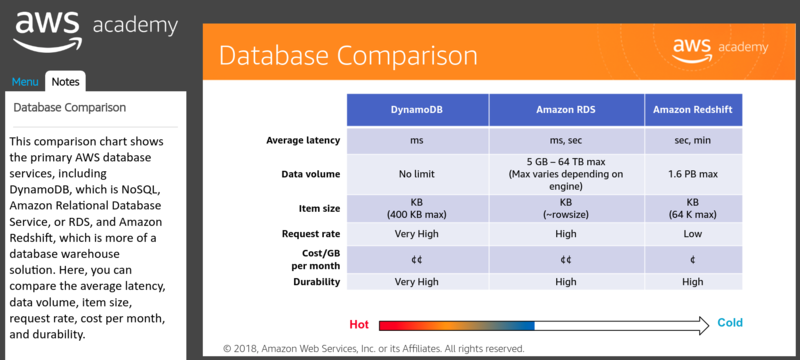

Database comparison:

Amazon ElastiCache: a web service that simplifies deploying, operating, and scaling an in-memory cache in the cloud.

- Improves the performance of web applications by allowing you to retrieve information from fast, managed, in-memory caches, instead of relying entirely on slower disk-based databases.

- Simplifies and offloads the management, monitoring, and operation of in-memory cache environments.

- Supports the Memcached and Redis open-source in-memory caching engines.
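The idea of reading from a fast in-memory cache instead of going to the database every time can be sketched with a cache-aside lookup. This is a minimal illustration under stated assumptions: a plain Python dictionary stands in for a Memcached or Redis node, and `CacheAside`, `slow_db_lookup`, and the TTL value are hypothetical names chosen for the example, not part of any ElastiCache API.

```python
import time


class CacheAside:
    """Check the cache first; on a miss, load from the database and store the result."""

    def __init__(self, load_from_db, ttl_seconds=60.0, clock=time.monotonic):
        self._load = load_from_db
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (value, expires_at); stand-in for a real cache

    def get(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]  # cache hit: no database call
        value = self._load(key)  # cache miss or expired entry
        self._entries[key] = (value, now + self._ttl)
        return value


db_calls = []


def slow_db_lookup(key):
    """Hypothetical stand-in for a disk-based database query."""
    db_calls.append(key)
    return f"row-for-{key}"


cache = CacheAside(slow_db_lookup, ttl_seconds=30.0)
first = cache.get("user:1")
second = cache.get("user:1")
print(first, second, len(db_calls))  # row-for-user:1 row-for-user:1 1
```

The second read is served from memory, so the database is queried only once until the entry expires.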

Amazon Kinesis Streams: enables you to build custom applications that process or analyze streaming data. You can continuously capture and store terabytes of data per hour from hundreds of thousands of sources.

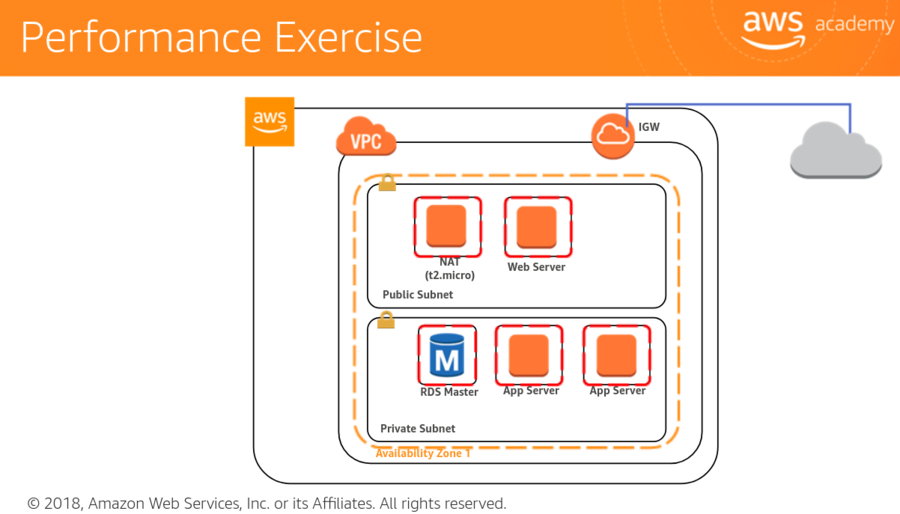

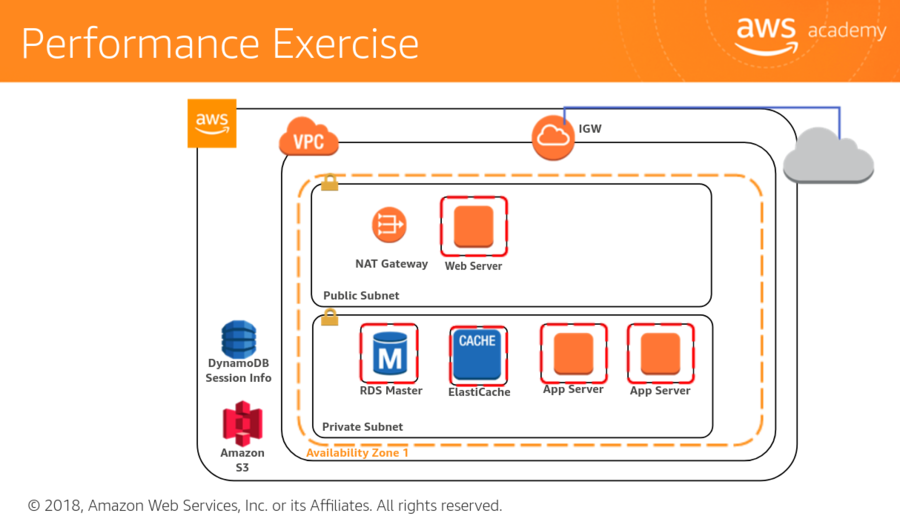

Performance efficiency exercise

Your small business website has been experiencing intermittent page load issues where users report significant load times. Consider performance efficiency enhancement in accordance with the well-architected performance efficiency pillar.

- Is your NAT instance (t2.micro) the bottleneck? Consider a bigger EC2 instance or use a managed NAT.

- Should you consider Elastic Load Balancing and Auto Scaling groups?

- By offloading static content to S3, can you relieve the processing burden from the EC2 instances?

- Are your compute resources the right combination of CPU and memory?

- Is the page load issue due to throughput or database latency? Should you consider provisioned IOPS for your RDS instance to get consistent performance? Should you consider offloading some of the database reads to a dedicated read replica?

- What's the geographic location and latency from the region to the user? Have you considered using a content distribution network, like CloudFront, to bring the content closer to your users?
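Before working through the questions above, it can help to quantify the intermittent slowness. The sketch below uses made-up page load times (an assumption for illustration only) to show why a high percentile exposes intermittent problems that the median hides.

```python
import statistics

# Hypothetical page load times in seconds: mostly fast, occasionally very slow.
load_times = [0.3] * 18 + [4.0] * 2

mean = statistics.mean(load_times)
median = statistics.median(load_times)
# quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
p95 = statistics.quantiles(load_times, n=100)[94]

print(f"mean={mean:.2f}s median={median:.2f}s p95={p95:.2f}s")
```

With data shaped like this, the median stays low while the 95th percentile reflects the slow requests that users actually complain about.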

Performance questions

- How do you select the appropriate instance type, storage solution, database solution, and proximity and caching solution for your system?:

- Select solutions based on:

- Predicted resource needs

- Required internal governance standards

- Cost or budget

- Benchmarking results

- Load testing results

- Guidance from AWS or from a member of the AWS Partner Network (APN)

- How do you ensure that you continue to have the most appropriate instance types, storage solutions, database solutions, and proximity and caching solutions as new services and features are launched?:

- Review cyclically, and reselect new services and features based on predicted resource needs.

- Benchmark and load test after each new service or feature is released, and use that information to make the best selection based on a calculation of performance/cost.

- How do you monitor your instances, storage solutions, databases, and proximity and caching solutions to ensure they are performing as expected?:

- Monitor instances with:

- Amazon CloudWatch

- Third-party tools

- Perform a periodic review of your monitoring dashboards.

- Use alarm-based notification to automatically alert you if metrics are out of safe bounds (CloudWatch).

- Use trigger-based actions to cause automated actions to remediate or escalate issues.

- How do you ensure that the capacity and throughput of your instances, storage solutions, databases and proximity and caching solution match demand?:

- React based on manually reviewing metrics

- Plan future capacity and throughput based on metrics and/or planned events.

- Automate against metrics.

- Perform a periodic review of cache usage and demand over time.

- Monitor usage and demand over time

- Use the following for automatic management:

- Scripting and tools

- Auto Scaling
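The alarm-based notification and "automate against metrics" points above can be sketched as a simple threshold check. This is a simplified model written for illustration, not CloudWatch's actual evaluation logic: the function name and the sample CPU values are hypothetical, and the state names are borrowed from CloudWatch alarm states.

```python
def alarm_state(datapoints, threshold, periods):
    """Return ALARM when the last `periods` datapoints all exceed `threshold`."""
    if periods < 1:
        raise ValueError("periods must be at least 1")
    if len(datapoints) < periods:
        return "INSUFFICIENT_DATA"
    recent = datapoints[-periods:]
    return "ALARM" if all(value > threshold for value in recent) else "OK"


# Hypothetical CPU utilization samples (percent), oldest first.
cpu = [40, 55, 82, 91, 88]

print(alarm_state(cpu, threshold=80, periods=3))   # ALARM
print(alarm_state(cpu, threshold=80, periods=4))   # OK
print(alarm_state([90], threshold=80, periods=3))  # INSUFFICIENT_DATA
```

Requiring several consecutive breaching datapoints is one way to avoid triggering a scaling or remediation action on a single spike.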

Module 13 - Well-Architected Pillar 5 - Cost Optimization

The ability to run systems to deliver business value at the lowest price point.

Vocareum labs

ACA Module 3 LAB - Making Your Environment Highly Available

https://labs.vocareum.com/main/main.php

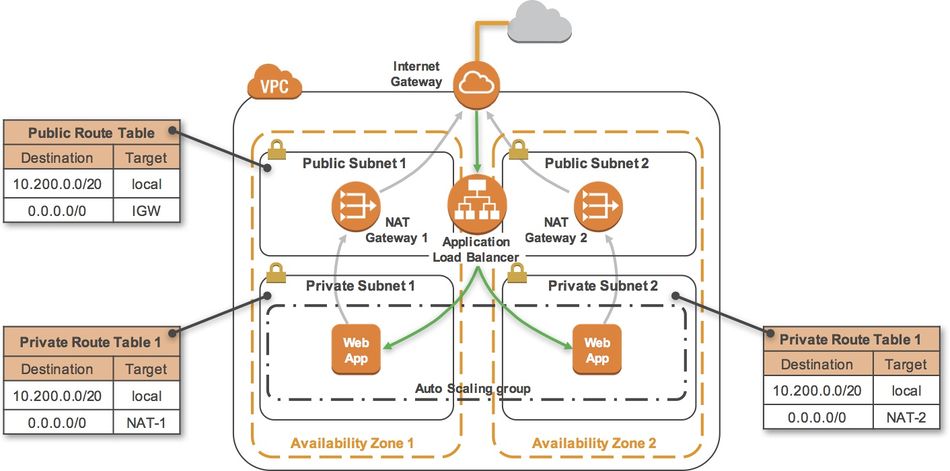

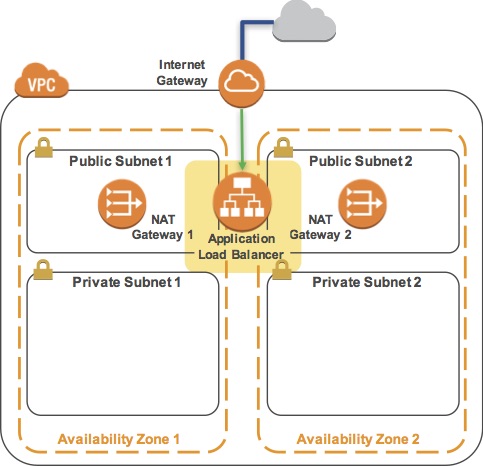

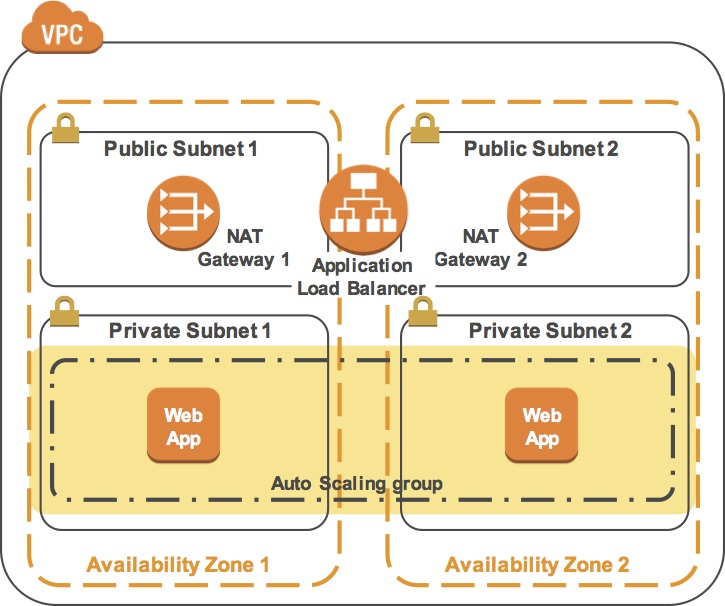

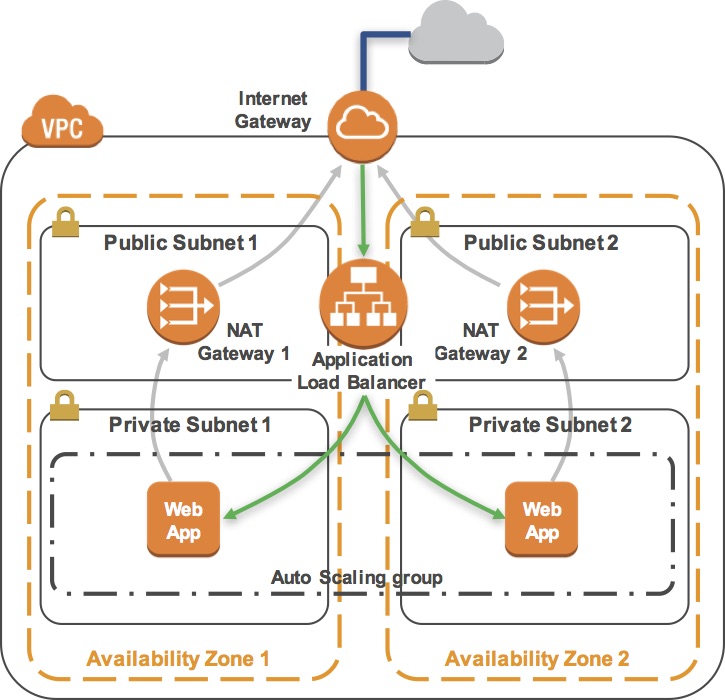

Critical business systems should be deployed as Highly Available applications, meaning that they can remain operational even when some components fail. To achieve High Availability in AWS, it is recommended to run services across multiple Availability Zones.

Many AWS services are inherently highly available, such as Load Balancers, or can be configured for high availability, such as deploying Amazon EC2 instances in multiple Availability Zones.

In this lab, you will start with an application running on a single Amazon EC2 instance and will then convert it to be Highly Available.

Objectives:

After completing this lab, you will be able to:

- Create an image of an existing Amazon EC2 instance and use it to launch new instances.

- Expand an Amazon VPC to additional Availability Zones.

- Create VPC Subnets and Route Tables.

- Create an AWS NAT Gateway.

- Create a Load Balancer.

- Create an Auto Scaling group.

The final product of your lab will be this:

Task 1 - Inspect Your environment

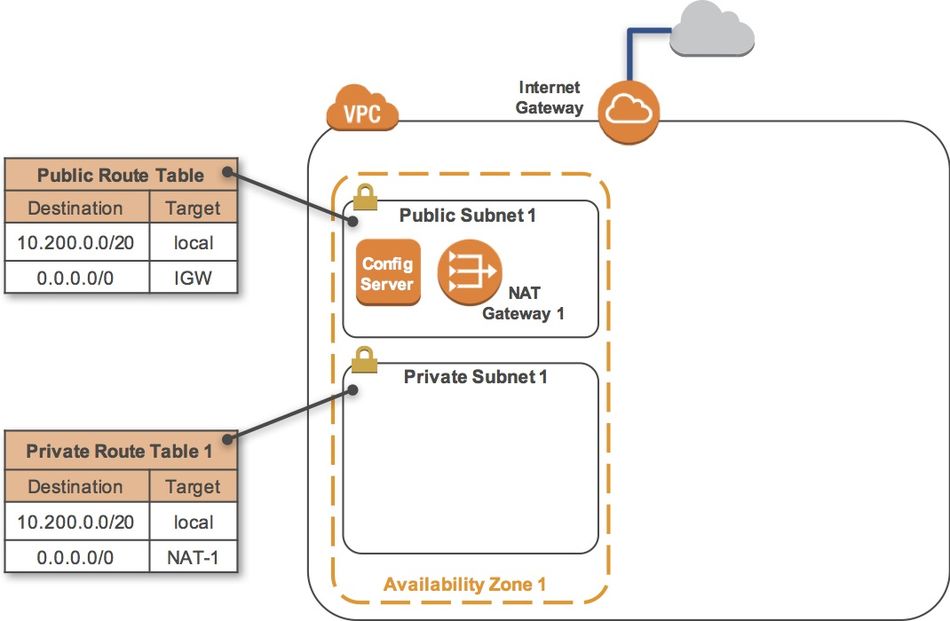

This lab begins with an environment already deployed via AWS CloudFormation including:

- An Amazon VPC

- A public subnet and a private subnet in one Availability Zone

- An Internet Gateway associated with the public subnet

- A NAT Gateway in the public subnet

- An Amazon EC2 instance in the public subnet

Task 1-1 - Inspect Your VPC

- On the AWS Management Console, on the Services menu, click VPC.

- In the left navigation pane, click Your VPCs.

- Here you can see the Lab VPC that has been created for you.

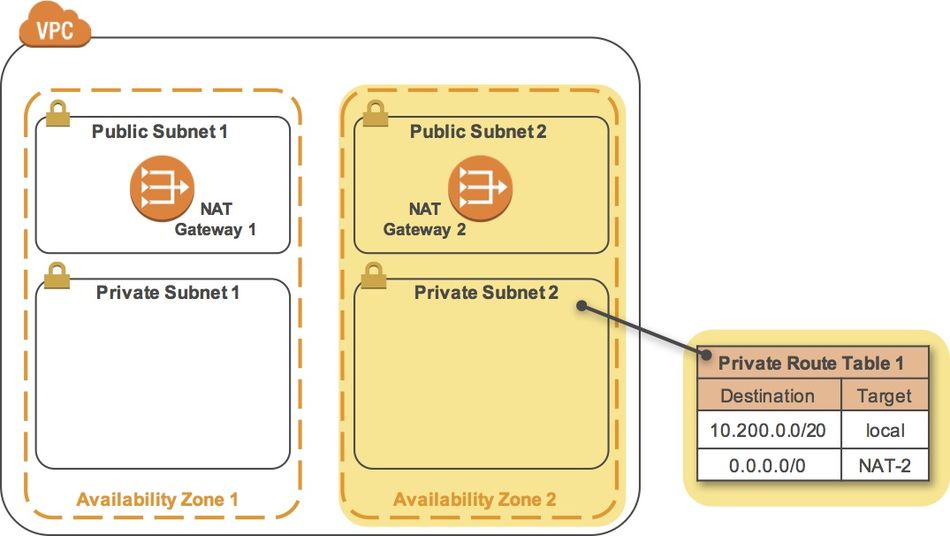

- IP range: In the IPv4 CIDR column, you can see a value of 10.200.0.0/20, which means this VPC includes 4,096 IPs between 10.200.0.0 and 10.200.15.255 (with some reserved and unusable).

- It is also attached to a Route Table and a Network ACL.

- This VPC also has a Tenancy of default, so instances launched into this VPC will by default use shared tenancy hardware.

- Subnets: In the navigation pane, click Subnets.

- Here you can see the Public Subnet 1 subnet:

- In the VPC column, you can see that this subnet exists inside of Lab VPC

- IP range: In the IPv4 CIDR column, you can see a value of 10.200.0.0/24, which means this subnet includes the 256 IPs (5 of which are reserved and unusable) between 10.200.0.0 and 10.200.0.255.

- Availability Zone: In the Availability Zone column, you can see the Availability Zone in which this subnet resides.

- Click on the row containing Public Subnet 1 to reveal more details at the bottom of the page.

- Route Table: Click the Route Table tab in the lower half of the window.

- Here you can see details about the Routing for this subnet:

- Local traffic: The first entry specifies that traffic destined within the VPC's CIDR range (10.200.0.0/20) will be routed within the VPC (local).

- Internet Gateway: The second entry specifies that any traffic destined for the Internet (0.0.0.0/0) is routed to the Internet Gateway (igw-). This setting makes it a Public Subnet.

- In the left navigation pane, click Internet Gateways.

- Notice that an Internet Gateway is already associated with Lab VPC.

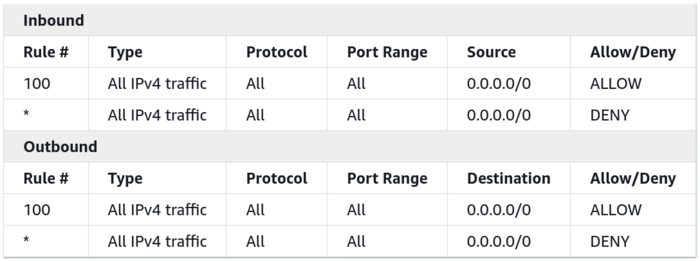

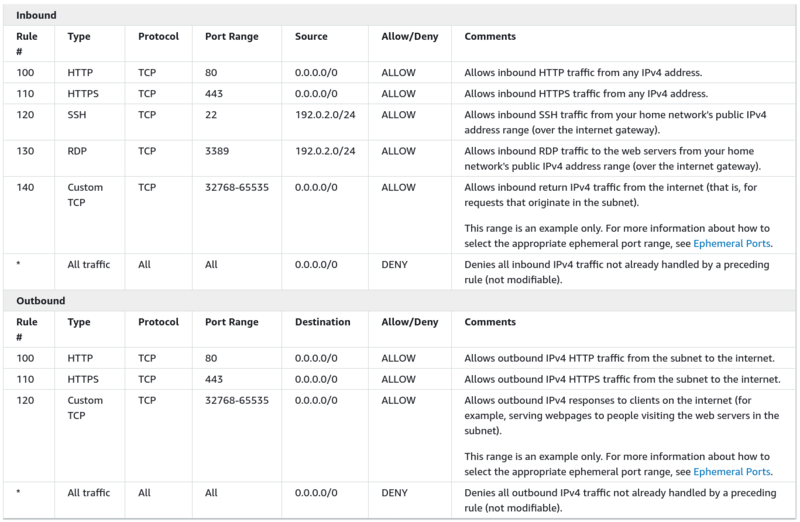
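The CIDR values and route table entries described above can be checked with Python's standard `ipaddress` module. This is a sketch for verifying the arithmetic, not part of the lab. The `pick_route` helper and the `internet-gateway` label are hypothetical (the label stands in for the gateway ID shown in the console), and the route choice assumes the most specific matching route wins.

```python
import ipaddress

vpc = ipaddress.ip_network("10.200.0.0/20")
subnet = ipaddress.ip_network("10.200.0.0/24")

# Five addresses per subnet are reserved and unusable, as noted for Public Subnet 1.
RESERVED_PER_SUBNET = 5


def describe(network):
    """Return the first address, last address, and size of a CIDR block."""
    return {
        "first": str(network[0]),
        "last": str(network[-1]),
        "total": network.num_addresses,
    }


def pick_route(destination, routes):
    """Choose the matching route with the longest prefix (most specific)."""
    address = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in routes
        if address in ipaddress.ip_network(cidr)
    ]
    best = max(matches, key=lambda match: match[0].prefixlen, default=None)
    return best[1] if best else None


vpc_info = describe(vpc)
subnet_info = describe(subnet)
usable_in_subnet = subnet.num_addresses - RESERVED_PER_SUBNET

# The two route table entries of the public subnet.
routes = [("10.200.0.0/20", "local"), ("0.0.0.0/0", "internet-gateway")]

print(vpc_info)               # {'first': '10.200.0.0', 'last': '10.200.15.255', 'total': 4096}
print(subnet_info)            # {'first': '10.200.0.0', 'last': '10.200.0.255', 'total': 256}
print(usable_in_subnet)       # 251
print(subnet.subnet_of(vpc))  # True
print(pick_route("10.200.3.7", routes))  # local
print(pick_route("8.8.8.8", routes))     # internet-gateway
```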

- ACL: Click the Network ACL tab in the lower half of the window.

- Here you can see the Network Access Control List (ACL) associated with the subnet. The rules currently permit ALL Traffic to flow in and out of the subnet, but they can be further restricted by using Security Groups.