Difference between revisions of "Página de pruebas"

Adelo Vieira (talk | contribs) (Blanked the page) (Tag: Blanking) |

Adelo Vieira (talk | contribs) |

||

| Line 1: | Line 1: | ||

| + | ==Decision Trees== | ||

| + | * 10/06: Recorded class - Decision tree | ||

| + | :* https://drive.google.com/drive/folders/1TW494XF-laGGJiLFApz8bJfMstG4Yqk_ | ||

| + | |||

| + | <br /> | ||

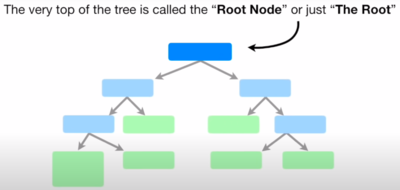

| + | * '''What is a Decision Tree''' | ||

| + | |||

| + | [[File:Decision_Trees_terminology1.png|400px|thumb|right|]] | ||

| + | |||

| + | [[File:Decision_Trees_terminology2.png|400px|thumb|right|]] | ||

| + | |||

| + | [[File:Decision_Trees_terminology3.png|400px|thumb|right|]] | ||

| + | |||

| + | : In general terms, we can say that "A decision tree is a flowchart-like structure in which each internal node represents a "test" on an attribute (e.g. whether a coin flip comes up heads or tails), each branch represents the outcome of the test, and each leaf node represents a class label (decision taken after computing all attributes). The paths from root to leaf represent classification rules". https://en.wikipedia.org/wiki/Decision_tree | ||

| + | |||

| + | |||

| + | : In a ML context, we can say that DT is A predictive model or a ML method based on a series of branching Boolean tests, splitting the data into smaller and smaller subsets to identify patterns that can be used for prediction. The model is a series of logical decisions on an attribute (tests for which the answer is true or false) [Noel Cosgrave slides] | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | * '''How Decision Trees Work''' | ||

| + | :* Decision tree learners build models in the form of tree structures. | ||

| + | |||

| + | :* The model is a series of logical decisions on an attribute (tests for which the answer is true or false) | ||

| + | |||

| + | :* Decision nodes split the data using these tests | ||

| + | |||

| + | :* Leaf nodes assign a predicted class based on a combination of the decisions in higher nodes | ||

| + | |||

| + | :* Data travels through the tree from root to leaf nodes | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | * '''A decision rule''' is the path from the root node to the leaf node | ||

| + | :* Each rule is mutually exclusive | ||

| + | :* Every row (or observation) in the data is covered by a single rule | ||

| + | :* Covering a data observation means that the observation satisfies the conditions of the rule | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | * '''A dataset can have many possible decision trees''' | ||

| + | : In practice, we want small & accurate trees | ||

| + | |||

| + | |||

| + | :* What is the inductive bias in Decision Tree learning? | ||

| + | ::* Shorter trees are preferred over longer trees: Smaller trees are more general, usually more accurate, and easier to understand by humans (and to communicate!) | ||

| + | |||

| + | ::* Prefer trees that place high information gain attributes close to the root | ||

| + | |||

| + | |||

| + | :* More succinct hypotheses are preferred. Why? | ||

| + | ::* There are fewer succinct hypotheses than complex ones | ||

| + | ::* If a succinct hypothesis explains the data this is unlikely to be a coincidence | ||

| + | ::* However, not every succinct hypothesis is a reasonable one. | ||

| + | |||

| + | |||

| + | <br /> | ||

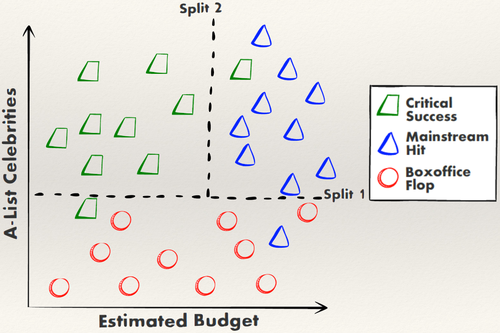

| + | '''Example 1:''' | ||

| + | |||

| + | The model for predicting the future success of a movie can be represented as a simple tree: | ||

| + | |||

| + | {| | ||

| + | | | ||

| + | {| class="wikitable" | ||

| + | !Movie | ||

| + | !Number of celebrities | ||

| + | !Budget | ||

| + | !Success (True label) | ||

| + | !Success (Predicted label) | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 1 | ||

| + | |low | ||

| + | |hight | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 2 | ||

| + | |low | ||

| + | |low | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 3 | ||

| + | |low | ||

| + | |hight | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 4 | ||

| + | |low | ||

| + | |low | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 5 | ||

| + | |low | ||

| + | |hight | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 6 | ||

| + | |low | ||

| + | |low | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 7 | ||

| + | |low | ||

| + | |hight | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 8 | ||

| + | |low | ||

| + | |low | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 9 | ||

| + | |low | ||

| + | |hight | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#ff794d" | ||

| + | |Movie 10 | ||

| + | |low | ||

| + | |low | ||

| + | |boxoffice flop | ||

| + | |boxoffice flop | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 11 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 12 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 13 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |'''Movie 14''' | ||

| + | |'''low''' | ||

| + | |'''hight''' | ||

| + | |'''mainstream hit''' | ||

| + | |style="background-color:#ff794d"|'''boxoffice flop''' | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 15 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 16 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 17 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 18 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 19 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#66a3ff" | ||

| + | |Movie 20 | ||

| + | |hight | ||

| + | |hight | ||

| + | |mainstream hit | ||

| + | |mainstream hit | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 21 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 22 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 23 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |'''Movie 24''' | ||

| + | |'''low''' | ||

| + | |'''hight''' | ||

| + | |'''critical success''' | ||

| + | |style="background-color:#ff794d"|'''boxoffice flop''' | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 25 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |'''Movie 26''' | ||

| + | |'''hight''' | ||

| + | |'''hight''' | ||

| + | |'''critical success''' | ||

| + | |style="background-color:#66a3ff"|'''mainstream hit''' | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 27 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 28 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 29 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |- style="background-color:#00e6b0" | ||

| + | |Movie 30 | ||

| + | |hight | ||

| + | |low | ||

| + | |critical success | ||

| + | |critical success | ||

| + | |} | ||

| + | | | ||

| + | [[File:DecisionTree-NoelCosgraveSlides_2.png|500px|thumb|center|]] | ||

| + | |||

| + | [[File:DecisionTree-NoelCosgraveSlides_1.png|500px|thumb|center|]] | ||

| + | |} | ||

| + | |||

| + | |||

| + | |||

| + | <br /> | ||

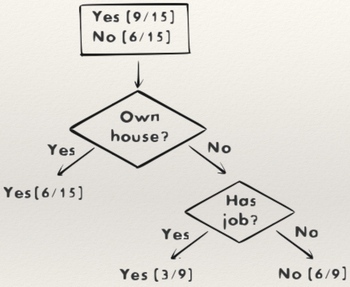

| + | '''Example 2:''' | ||

| + | |||

| + | This decision tree is used to decide whether or not to provide a loan to a customer | ||

| + | |||

| + | {| | ||

| + | |[[File:DecisionTree-NoelCosgraveSlides_3.png|350px|thumb|center|]] | ||

| + | | | ||

| + | * <math>15</math> training examples | ||

| + | * <math>Yes</math> = approval, <math>No</math> = rejection | ||

| + | * The <math>x/y</math> values mean that <math>x</math> out of <math>y</math> training examples that reach this leaf node has the class of the leaf. This is the confidence | ||

| + | * The <math>x</math> value is the support count | ||

| + | * <math>x/total</math> training examples is the support | ||

| + | |} | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | ===The algorithm=== | ||

| + | This is a basic but nice explanation of the algorithm. | ||

| + | |||

| + | <center> | ||

| + | <div style="width: 420pt;">{{#ev:youtube|https://www.youtube.com/watch?v=7VeUPuFGJHk|||||start=110}} | ||

| + | </div> | ||

| + | </center> | ||

| + | |||

| + | The algorithm described above is something like this: | ||

| + | |||

| + | * '''We first need to determine which will be the Root:''' | ||

| + | |||

| + | :* The attribute (Chest pain, Good blood circulation, Blocked arteries) that determines better whether a Patient has '''Heart Disease''' or not will be chosen as the Root. | ||

| + | |||

| + | :* To do so, we need to evaluate the three attributes by calculating what is known as '''Impurity''', which is a measure of how bad the Attribute is able to separate (determine) our label attribute (Heart Disease in our case). | ||

| + | |||

| + | :*There are many ways to measure Impurity, one of the popular ones is to calculate the '''Gini''' impurity. | ||

| + | |||

| + | |||

| + | :* '''So, let's calculate the Gini impurity for our «Chest pain» attribute:''' | ||

| + | |||

| + | ::* We look at chest pain for all 303 patients in our data: | ||

| + | |||

| + | [[File:Decision_trees-StatQuest1.png|600px|thumb|center|Taken from https://www.youtube.com/watch?v=7VeUPuFGJHk]] | ||

| + | |||

| + | |||

| + | ::* We calculate the Gini impurity for each branch (True: The patient has chest pain | False: The patient does not have chest pain): | ||

| + | |||

| + | :::<div style='font-size:15pt'><math>\color{blue}{ | ||

| + | Gini\ Impurity = 1 - \sum_{i=1}^{N} P_{i}^{2} | ||

| + | } | ||

| + | </math> | ||

| + | </div> | ||

| + | |||

| + | |||

| + | ::* For the '''True''' branch: | ||

| + | |||

| + | :::<math> | ||

| + | Gini\ Impurity_{True} = 1 - (Probability\ of\ Yes)^{2} + (Probability\ of\ No)^{2} | ||

| + | </math> | ||

| + | |||

| + | :::<math> | ||

| + | Gini\ Impurity_{True} = 1 - \biggl(\frac{105}{105 + 39}\biggl)^2 - \biggl(\frac{39}{105 + 39}\biggl)^2 = 0.395 | ||

| + | </math> | ||

| + | |||

| + | |||

| + | ::* For the '''False''' branch: | ||

| + | |||

| + | :::<math> | ||

| + | Gini\ Impurity_{False} = 0.336 | ||

| + | </math> | ||

| + | |||

| + | |||

| + | ::*<div style='font-size:13pt'><math>\color{blue}{ | ||

| + | Total\ Gini\ Impurity = Weighted\ average\ of\ Gini\ impurities\ for\ the\ leaf\ nodes\ (branches)} | ||

| + | </math></div> | ||

| + | |||

| + | :::<math> | ||

| + | Gini\ Impurity_{Chest pain} = \biggl( \frac{144}{144 + 159} \biggl)0.395\ +\ \biggl( \frac{159}{144 + 159} \biggl)0.336 = 0.364 | ||

| + | </math> | ||

| + | |||

| + | |||

| + | :* '''Good Blood Circulation''' | ||

| + | ::<math> | ||

| + | Gini\ Impurity_{Good\ Blood\ Circulation} = 0.360 | ||

| + | </math> | ||

| + | |||

| + | |||

| + | :* '''Blocked Arteries''' | ||

| + | ::<math> | ||

| + | Gini\ Impurity_{Blocked\ Arteries} = 0.381 | ||

| + | </math> | ||

| + | |||

| + | |||

| + | [[File:Decision_trees-StatQuest2.png|600px|thumb|center|Taken from https://www.youtube.com/watch?v=7VeUPuFGJHk]] | ||

| + | |||

| + | |||

| + | * '''Then, we follow the same method to determine the following nodes''' | ||

| + | |||

| + | |||

| + | * '''A node becomes a leaf when the Gini impurity for a node before is lower than the Gini impurity after using a new attribute''' | ||

| + | |||

| + | [[File:Decision_trees-StatQuest3.png|600px|thumb|center|Taken from https://www.youtube.com/watch?v=7VeUPuFGJHk]] | ||

| + | |||

| + | |||

| + | * '''At the end, our DT looks like this:''' | ||

| + | |||

| + | [[File:Decision_trees-StatQuest4.png|600px|thumb|center|Taken from https://www.youtube.com/watch?v=7VeUPuFGJHk]] | ||

| + | |||

| + | |||

| + | |||

| + | * '''For numeric data:''' | ||

| + | : Ex.: Patient weight | ||

| + | |||

| + | |||

| + | * '''Ranked data and Multiple choice data:''' | ||

| + | : Ranked data: For example, "Rank my jokes on a scale of 1 to 4". | ||

| + | : Multiple choice data: For example, "Which color do you like: red, blue or green". | ||

| + | |||

| + | |||

| + | |||

| + | <br /> <br /> | ||

| + | Decision Trees, Part 2 - Feature Selection and Missing Data: https://www.youtube.com/watch?v=wpNl-JwwplA | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | Regression Trees: https://www.youtube.com/watch?v=g9c66TUylZ4 | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | ====The ID3 algorithm==== | ||

| + | ID3 (Quinlan, 1986) is an early algorithm for learning Decision Trees. The learning of the tree is top-down. The algorithm is greedy, it looks at a single attribute and gain in each step. This may fail when a combination of attributes is needed to improve the purity of a node. | ||

| + | |||

| + | |||

| + | At each split, the question is "which attribute should be tested next? Which logical test gives us more information?". This is determined by the measures of '''entropy''' and '''information gain'''. These are discussed later. | ||

| + | |||

| + | |||

| + | A new decision node is then created for each outcome of the test and examples are partitioned according to this value. The process is repeated for each new node until all the examples are classified correctly or there are no attributes left. | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | ====The C5.0 algorithm==== | ||

| + | C5.0 (Quinlan, 1993) is a refinement of C4.5 which in itself improved upon ID3. '''It is the industry standard for producing decision trees'''. It performs well for most problems out-of-the-box. Unlike other machine-learning techniques, it has high explanatory power, it can be understood and explained. | ||

| + | |||

| + | . | ||

| + | |||

| + | . | ||

| + | |||

| + | . | ||

| + | |||

| + | |||

| + | <br /> | ||

| + | |||

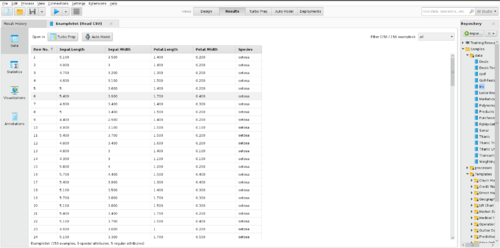

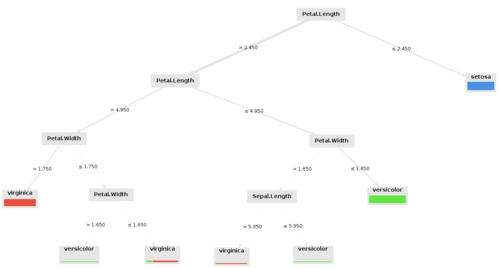

| + | ===Example in RapidMiner=== | ||

| + | <div style="text-align: center;"> | ||

| + | |||

| + | {| style="margin: 0 auto;" | | ||

| + | |[[File:decision_tree_RapidMiner_example-Iris_data3.png|300px|thumb|center|]] | ||

| + | |[[File:decision_tree_RapidMiner_example-Iris_data2.png|500px|thumb|center|]] | ||

| + | |- | ||

| + | |<img style='width:300px' src='https://upload.wikimedia.org/wikipedia/commons/7/78/Petal-sepal.jpg' /> | ||

| + | |[[File:decision_tree_RapidMiner_example-Iris_data1.png|500px|thumb|center|]] | ||

| + | |} | ||

| + | |||

| + | |||

| + | [[File:Decision_tree_RapidMiner_example-Iris_data.mp4|800px|thumb|center|]] | ||

| + | |||

| + | |||

| + | <pdf width="1500" height="600">File:Decision_tree_Example_RapidMiner.pdf</pdf> | ||

| + | [[File:Decision_tree_Example_RapidMiner.pdf]] | ||

| + | </div> | ||

| + | |||

| + | |||

| + | <br /> | ||

Revision as of 11:11, 18 January 2021

Contents

Decision Trees

- 10/06: Recorded class - Decision tree

- What is a Decision Tree

- In general terms, we can say that "A decision tree is a flowchart-like structure in which each internal node represents a "test" on an attribute (e.g. whether a coin flip comes up heads or tails), each branch represents the outcome of the test, and each leaf node represents a class label (decision taken after computing all attributes). The paths from root to leaf represent classification rules". https://en.wikipedia.org/wiki/Decision_tree

- In a ML context, we can say that DT is A predictive model or a ML method based on a series of branching Boolean tests, splitting the data into smaller and smaller subsets to identify patterns that can be used for prediction. The model is a series of logical decisions on an attribute (tests for which the answer is true or false) [Noel Cosgrave slides]

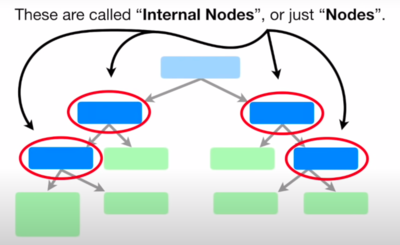

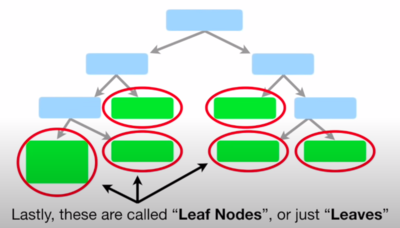

- How Decision Trees Work

- Decision tree learners build models in the form of tree structures.

- The model is a series of logical decisions on an attribute (tests for which the answer is true or false)

- Decision nodes split the data using these tests

- Leaf nodes assign a predicted class based on a combination of the decisions in higher nodes

- Data travels through the tree from root to leaf nodes

- A decision rule is the path from the root node to the leaf node

- Each rule is mutually exclusive

- Every row (or observation) in the data is covered by a single rule

- Covering a data observation means that the observation satisfies the conditions of the rule

- A dataset can have many possible decision trees

- In practice, we want small & accurate trees

- What is the inductive bias in Decision Tree learning?

- Shorter trees are preferred over longer trees: Smaller trees are more general, usually more accurate, and easier to understand by humans (and to communicate!)

- Prefer trees that place high information gain attributes close to the root

- More succinct hypotheses are preferred. Why?

- There are fewer succinct hypotheses than complex ones

- If a succinct hypothesis explains the data this is unlikely to be a coincidence

- However, not every succinct hypothesis is a reasonable one.

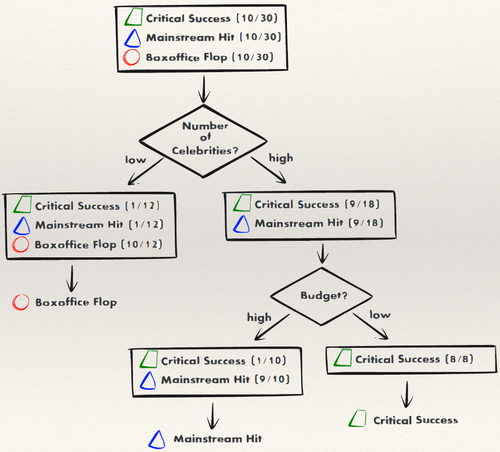

Example 1:

The model for predicting the future success of a movie can be represented as a simple tree:

|

Example 2:

This decision tree is used to decide whether or not to provide a loan to a customer

|

The algorithm

This is a basic but nice explanation of the algorithm.

The algorithm described above is something like this:

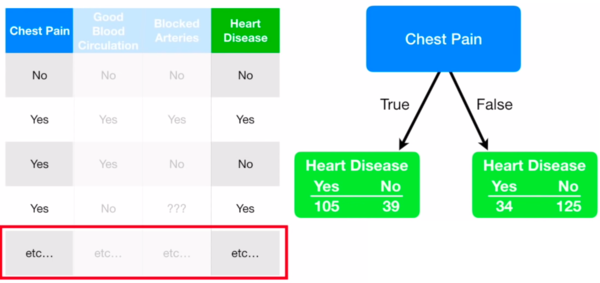

- We first need to determine which will be the Root:

- The attribute (Chest pain, Good blood circulation, Blocked arteries) that determines better whether a Patient has Heart Disease or not will be chosen as the Root.

- To do so, we need to evaluate the three attributes by calculating what is known as Impurity, which is a measure of how bad the Attribute is able to separate (determine) our label attribute (Heart Disease in our case).

- There are many ways to measure Impurity, one of the popular ones is to calculate the Gini impurity.

- So, let's calculate the Gini impurity for our «Chest pain» attribute:

- We look at chest pain for all 303 patients in our data:

- We calculate the Gini impurity for each branch (True: The patient has chest pain | False: The patient does not have chest pain):

- For the True branch:

- For the False branch:

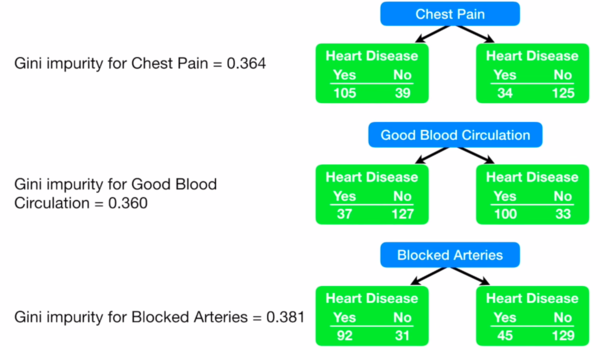

- Good Blood Circulation

- Blocked Arteries

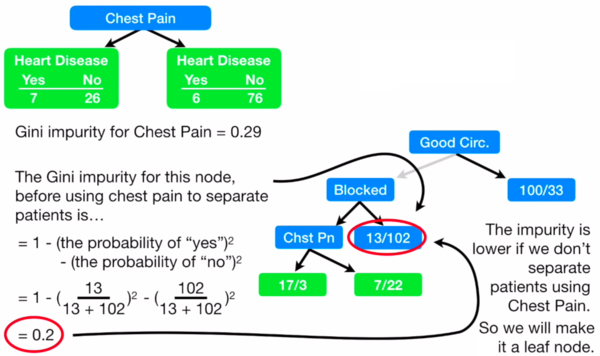

- Then, we follow the same method to determine the following nodes

- A node becomes a leaf when the Gini impurity for a node before is lower than the Gini impurity after using a new attribute

- At the end, our DT looks like this:

- For numeric data:

- Ex.: Patient weight

- Ranked data and Multiple choice data:

- Ranked data: For example, "Rank my jokes on a scale of 1 to 4".

- Multiple choice data: For example, "Which color do you like: red, blue or green".

Decision Trees, Part 2 - Feature Selection and Missing Data: https://www.youtube.com/watch?v=wpNl-JwwplA

Regression Trees: https://www.youtube.com/watch?v=g9c66TUylZ4

The ID3 algorithm

ID3 (Quinlan, 1986) is an early algorithm for learning Decision Trees. The learning of the tree is top-down. The algorithm is greedy, it looks at a single attribute and gain in each step. This may fail when a combination of attributes is needed to improve the purity of a node.

At each split, the question is "which attribute should be tested next? Which logical test gives us more information?". This is determined by the measures of entropy and information gain. These are discussed later.

A new decision node is then created for each outcome of the test and examples are partitioned according to this value. The process is repeated for each new node until all the examples are classified correctly or there are no attributes left.

The C5.0 algorithm

C5.0 (Quinlan, 1993) is a refinement of C4.5 which in itself improved upon ID3. It is the industry standard for producing decision trees. It performs well for most problems out-of-the-box. Unlike other machine-learning techniques, it has high explanatory power, it can be understood and explained.

.

.

.

Example in RapidMiner